Rebellions Rebel100 Review: A Promising UCIe Pioneer

Verdict: The Rebellions Rebel100 stands out as an early adopter of UCIe-Advanced technology, offering a quad-chiplet AI accelerator that claims to match Nvidia H200 performance at a lower power envelope. Its innovative design, custom power delivery, and focus on seamless multi-chiplet operation make it a compelling solution for large-scale AI inference, particularly for those looking to leverage advanced packaging and power efficiency.

Introduction to a New Era of AI Acceleration

The landscape of AI acceleration is evolving rapidly, driven by demand for performance that outpaces what a single die on a leading-edge foundry process can deliver. Multi-chiplet designs have emerged as a critical solution: by integrating several smaller dies into a single package, developers can sidestep the yield problems of very large dies and keep scaling performance. South Korean firm Rebellions has entered this competitive arena with its Rebel100, an AI inference accelerator that is among the industry's first to use the Universal Chiplet Interconnect Express (UCIe) standard to stitch together four chiplets.

Revealed at ISSCC 2026, the Rebel100 is not just about raw power; it's about intelligent design, aiming to balance performance, price, and throughput while pushing the boundaries of chiplet integration. It’s a testament to how specialized architectures can achieve impressive results against established industry giants.

Key Specifications and Innovative Design

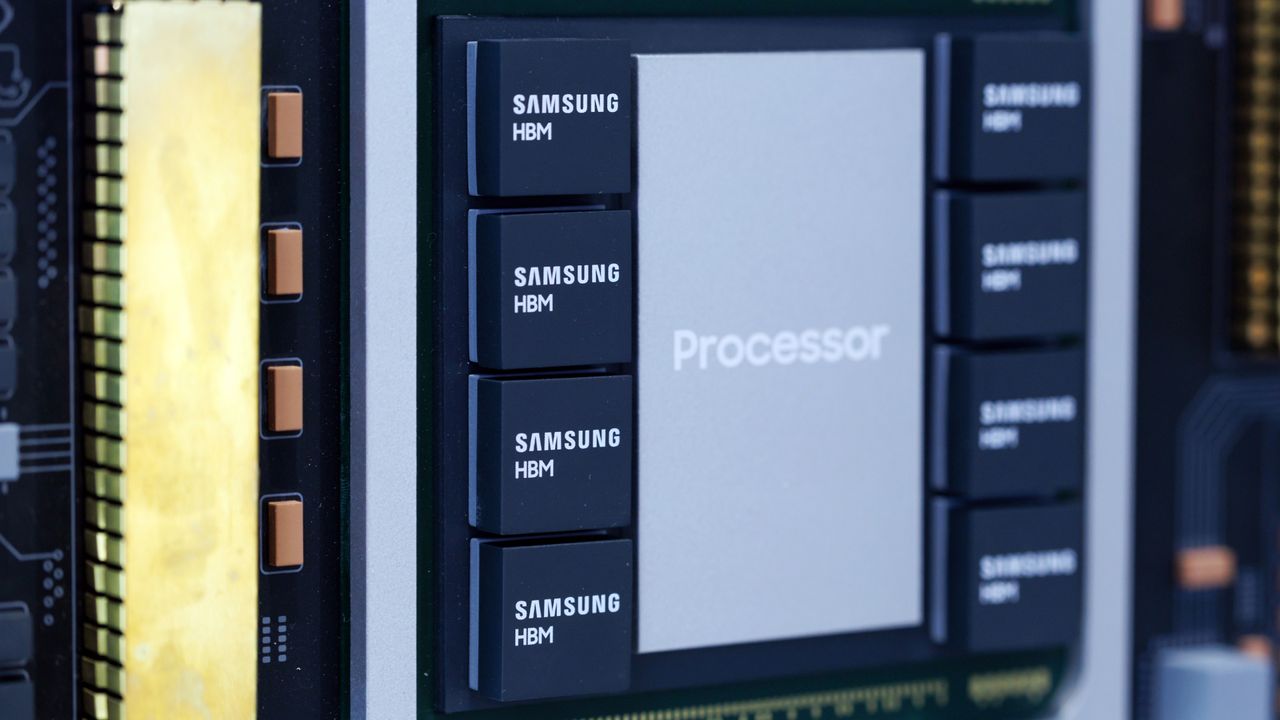

The Rebel100 is a system-in-package (SiP) composed of four distinct neural processing unit (NPU) dies, each measuring 320mm². These NPUs are fabricated on Samsung's performance-enhanced SF4X process and integrated using Samsung's I-CubeS advanced packaging, which is comparable to TSMC's CoWoS-S. Using smaller dies is a deliberate choice: a smaller die is less likely to contain a killer defect, which raises manufacturing yield, an important consideration given Samsung's pellicle-less EUV approach.

Each NPU die is paired with a 12-Hi HBM3E memory stack providing 36GB, for a total of 144GB of HBM3E per Rebel100 package. The chiplets are interconnected via a UCIe-Advanced die-to-die interface running at 16Gbps per lane, for an aggregate bandwidth of 4 TB/s. Crucially, the interconnect has a low 11ns FDI-to-FDI latency (FDI is UCIe's Flit-aware Die-to-die Interface), which is essential for making the four chiplets behave logically as a single, unified processor rather than as discrete units. This is achieved through a mesh topology that extends the on-chip 2D Network-on-Chip (NoC) across the UCIe interface, simplifying software development.
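The capacity and bandwidth figures above can be sanity-checked with a little arithmetic. The sketch below assumes each 12-Hi stack is built from 24Gb DRAM dies (the usual way to reach 36GB, but not stated by Rebellions) and uses decimal units; the lane count it derives is only what 4 TB/s at 16Gbps per lane would imply, not a disclosed figure.

```python
# Back-of-envelope check of the Rebel100 memory and die-to-die figures.
# Assumption: each 12-Hi HBM3E stack uses 24Gb DRAM dies (not stated by Rebellions).
# Assumption: decimal units throughout (1 TB = 1000 GB, 1 GB = 8 Gb).

HBM_LAYERS = 12        # 12-Hi stack
GBIT_PER_LAYER = 24    # assumed 24Gb per DRAM die
NPU_DIES = 4           # one HBM3E stack per NPU die


def stack_capacity_gb(layers: int, gbit_per_layer: int) -> float:
    """Capacity of one HBM stack in gigabytes."""
    return layers * gbit_per_layer / 8


def implied_lane_count(aggregate_tb_per_s: float, gbps_per_lane: float) -> float:
    """Lanes needed to reach the aggregate die-to-die bandwidth at a given per-lane rate."""
    aggregate_gbit_per_s = aggregate_tb_per_s * 1000 * 8
    return aggregate_gbit_per_s / gbps_per_lane


per_stack = stack_capacity_gb(HBM_LAYERS, GBIT_PER_LAYER)
package_total = per_stack * NPU_DIES
lanes = implied_lane_count(4, 16)
print(per_stack, package_total, lanes)  # 36.0 144.0 2000.0
```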

For host connectivity, the Rebel100 provides two PCIe 5.0 x16 interfaces, supporting SR-IOV and peer-to-peer transfers. Power integrity is bolstered by four integrated silicon capacitor (ISC) dies, which also contribute to mechanical stability.
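For a rough sense of host bandwidth, the sketch below computes the raw per-direction throughput of an x16 link, assuming the links run at the PCIe 5.0 rate of 32 GT/s per lane with 128b/130b encoding. It ignores packet and protocol overhead, so real transfer rates will be lower.

```python
# Raw per-direction bandwidth of a PCIe x16 link.
# Assumption: PCIe 5.0 signaling (32 GT/s per lane) with 128b/130b encoding;
# TLP/DLLP packet overhead is ignored, so real throughput is lower.

def pcie_gbytes_per_s(gt_per_s: float, lanes: int) -> float:
    """Per-direction raw bandwidth in GB/s after 128b/130b line encoding."""
    return gt_per_s * lanes * (128 / 130) / 8


per_link = pcie_gbytes_per_s(32.0, 16)
both_links = 2 * per_link  # the Rebel100 exposes two x16 host interfaces
print(f"{per_link:.1f} GB/s per link, {both_links:.1f} GB/s across both")
# 63.0 GB/s per link, 126.0 GB/s across both
```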

Performance Claims and Power Efficiency

Rebellions states that a single Rebel100 SiP can deliver 2 FP8 PFLOPS or 1 FP16 PFLOPS of performance without sparsity, all within a 600W power envelope. This is a significant claim, putting it directly in line with Nvidia's H200, which is rated at up to 700W. The company also reports 56.8 TPS (tokens per second) on LLaMA v3.3 70B with single-batch 2k/2k input/output sequences. These performance figures are provided by Rebellions itself and await independent verification.
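Taking the vendor figures at face value, the efficiency and latency implications are simple to work out. The sketch below reads the 56.8 TPS figure as tokens per second (the usual meaning in LLM benchmarks), uses TDP as a stand-in for actual power draw, and assumes "2k" means 2048 tokens; none of these are measured values.

```python
# Performance-per-watt and latency arithmetic from Rebellions' own claims.
# Assumptions: TDP stands in for actual power draw, the H200 is taken at the
# same 2 FP8 PFLOPS the comparison cites, and "2k" means 2048 tokens.

def tflops_per_watt(pflops: float, watts: float) -> float:
    return pflops * 1000 / watts


rebel = tflops_per_watt(2.0, 600)   # Rebel100, dense FP8
h200 = tflops_per_watt(2.0, 700)    # H200 as cited in the comparison
efficiency_gain = rebel / h200 - 1  # fractional perf/W advantage

TOKENS_PER_S = 56.8                 # LLaMA v3.3 70B, batch 1, 2k/2k
ms_per_token = 1000 / TOKENS_PER_S
# Steady-state decode time for a 2048-token answer (prefill of the input is ignored).
decode_seconds = 2048 / TOKENS_PER_S

print(f"{rebel:.2f} vs {h200:.2f} TFLOPS/W ({efficiency_gain:.1%} better)")
print(f"{ms_per_token:.1f} ms/token, ~{decode_seconds:.0f} s for 2048 output tokens")
# 3.33 vs 2.86 TFLOPS/W (16.7% better)
# 17.6 ms/token, ~36 s for 2048 output tokens
```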

The Rebel100 is envisioned as a foundational component for scalable AI infrastructure, supporting trillion-parameter models and million-token contexts across clusters ranging from dozens to tens of thousands of accelerators.
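To put "trillion-parameter models" in perspective, the sketch below computes the minimum number of packages needed just to hold a model's weights in HBM3E. It is a lower bound under stated assumptions: 144GB is treated as 144e9 bytes, and KV cache (which grows with million-token contexts), activations and replication are all ignored.

```python
import math

# How many Rebel100 packages are needed just to hold a model's weights?
# Assumptions: 144GB treated as 144e9 bytes; KV cache, activations and any
# replication are ignored, so real deployments need more packages.

HBM_BYTES_PER_PACKAGE = 144e9


def min_packages(params: float, bytes_per_param: float) -> int:
    """Smallest package count whose combined HBM can store the weights."""
    weight_bytes = params * bytes_per_param
    return math.ceil(weight_bytes / HBM_BYTES_PER_PACKAGE)


print(min_packages(1e12, 1))  # 1T params, FP8 weights: 7
print(min_packages(1e12, 2))  # 1T params, FP16 weights: 14
```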

Seamless Multi-Chiplet Operation and Custom Innovations

To ensure the quad-chiplet design operates as a cohesive unit, Rebellions developed a sophisticated internal 2D mesh Network-on-Chip (NoC) that logically extends across the UCIe interconnects. This allows the entire SiP to function as a single large, mesh-connected processor, greatly simplifying software development.
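One way to picture a single mesh spanning four dies is sketched below. It assumes a hypothetical 4x4 router grid per die, the four dies arranged 2x2, and dimension-order (XY) routing; the source does not disclose the Rebel100's NoC dimensions or routing algorithm. Software addresses one global coordinate space, and only the routers know which hops happen to cross a UCIe link.

```python
from typing import List, Tuple

Coord = Tuple[int, int]  # (row, col) in the global mesh

# Hypothetical per-die router grid; the real NoC dimensions are not public.
DIE_ROWS, DIE_COLS = 4, 4


def die_of(node: Coord) -> Tuple[int, int]:
    """Which chiplet (in an assumed 2x2 arrangement) owns this router."""
    r, c = node
    return (r // DIE_ROWS, c // DIE_COLS)


def xy_route(src: Coord, dst: Coord) -> List[Coord]:
    """Dimension-order routing: move horizontally (column) first, then vertically (row)."""
    r, c = src
    path = [(r, c)]
    step = 1 if dst[1] > c else -1
    while c != dst[1]:
        c += step
        path.append((r, c))
    step = 1 if dst[0] > r else -1
    while r != dst[0]:
        r += step
        path.append((r, c))
    return path


def ucie_crossings(path: List[Coord]) -> int:
    """Count hops whose endpoints sit on different chiplets, i.e. hops over UCIe."""
    return sum(die_of(a) != die_of(b) for a, b in zip(path, path[1:]))


path = xy_route((0, 0), (7, 7))
print(len(path) - 1, ucie_crossings(path))  # 14 2
```

In this model a corner-to-corner transfer takes 14 router hops, of which only 2 traverse a die-to-die link, which is why a low per-crossing latency such as the quoted 11ns matters for keeping the package behaving like one processor.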

While UCIe 1.0 supports optional protocols like CXL.io, CXL.mem, and CXL.cache, Rebellions chose to implement its own vendor-defined streaming and memory-semantics protocols. This decision allowed for a highly aggressive data-movement engine featuring a configurable DMA subsystem with eight execution engines per NPU die, capable of pulling data from local or remote HBM3E, or distributed shared memory at up to 2.6 TB/s per DMA. Task-level QoS controls are also implemented to prevent starvation and manage congestion.
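Rebellions does not describe how its task-level QoS works. As a hedged illustration of the general idea, the sketch below uses weighted round-robin, a common arbitration scheme swapped in here, to share one DMA engine between two hypothetical descriptor queues: the heavier queue gets more grants per round, but the lighter one is still served every round and therefore cannot starve.

```python
from collections import deque
from typing import Dict, List


def weighted_round_robin(queues: Dict[str, deque], weights: Dict[str, int]) -> List[str]:
    """Drain the queues, granting each queue up to weights[name] transfers per round.

    Every queue is visited once per round, so a low-weight queue is delayed
    but never starved. Note: this consumes (empties) the input deques.
    """
    if any(w < 1 for w in weights.values()):
        raise ValueError("every queue needs a weight of at least 1")
    order = []
    while any(queues.values()):
        for name, q in queues.items():
            for _ in range(weights[name]):
                if not q:
                    break
                order.append(q.popleft())
    return order


# Hypothetical queues of DMA descriptors competing for one engine.
queues = {
    "weight_prefetch": deque(["W0", "W1", "W2", "W3"]),
    "kv_cache": deque(["K0", "K1"]),
}
print(weighted_round_robin(queues, {"weight_prefetch": 3, "kv_cache": 1}))
# ['W0', 'W1', 'W2', 'K0', 'W3', 'K1']
```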

Synchronization across the four chiplets is managed by dedicated hardware synchronization managers within each NPU, operating either centrally or autonomously. This architecture deliberately avoids direct peer-to-peer communications and inter-unit dependencies to minimize overhead and maximize utilization during LLM inference.
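As a rough illustration of the centralized mode, the sketch below models a synchronization manager as a barrier counter: each die signals its arrival, and only the manager decides when everyone may proceed, so dies never wait on each other directly. The class and method names are hypothetical; Rebellions has not published the hardware interface.

```python
from typing import Dict, Set


class CentralSyncManager:
    """Hypothetical model of a centralized sync manager acting as a barrier.

    Dies report arrivals to the manager only; there is no die-to-die handshake.
    """

    def __init__(self, participants: int):
        self.participants = participants
        self.arrived: Dict[int, Set[int]] = {}  # barrier_id -> dies that arrived

    def arrive(self, barrier_id: int, die_id: int) -> bool:
        """Record an arrival; return True once every participant has arrived (release)."""
        waiting = self.arrived.setdefault(barrier_id, set())
        waiting.add(die_id)  # a repeated arrival from the same die is counted once
        if len(waiting) == self.participants:
            del self.arrived[barrier_id]  # reset so the barrier id can be reused
            return True
        return False


mgr = CentralSyncManager(participants=4)
print([mgr.arrive(barrier_id=0, die_id=d) for d in range(4)])
# [False, False, False, True]
```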

Unorthodox Power Delivery and Enhanced Reliability

Although the Rebel100 is rated at a 600W TDP, transient power surges can momentarily reach roughly double that nominal level, posing significant challenges for power integrity. Rebellions addresses this with a proprietary hardware staggering technique that offsets the start times of neural cores, smoothing current ramps and reducing supply noise. Dynamic limits on the instruction issue rate further mitigate sudden load changes.
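Why staggering helps can be shown with a deliberately simple model: supply droop scales with how fast total current changes (the L·di/dt term), so spreading core start times lowers the worst per-cycle current step. The sketch below assumes eight identical cores that each ramp linearly to full current over 10 cycles; the core count, ramp length and stagger offset are illustrative, not Rebel100 parameters.

```python
from typing import List


def total_current(n_cores: int, ramp_cycles: int, i_core: float,
                  offset: int, horizon: int) -> List[float]:
    """Aggregate current per cycle when core k starts at cycle k*offset and ramps
    linearly to i_core over ramp_cycles cycles (simplified, hypothetical model)."""
    totals = []
    for t in range(horizon):
        total = 0.0
        for k in range(n_cores):
            elapsed = t - k * offset
            if elapsed > 0:
                total += i_core * min(elapsed, ramp_cycles) / ramp_cycles
        totals.append(total)
    return totals


def max_step(currents: List[float]) -> float:
    """Largest cycle-to-cycle current increase, a proxy for worst-case di/dt."""
    return max(b - a for a, b in zip(currents, currents[1:]))


simultaneous = total_current(8, 10, 1.0, offset=0, horizon=100)
staggered = total_current(8, 10, 1.0, offset=10, horizon=100)
print(max_step(simultaneous), max_step(staggered))  # ~0.8 vs ~0.1
```

Both schedules settle at the same steady-state current, but the staggered one has an eighth of the worst-case step. The cost is that full throughput arrives later (cycle 80 instead of cycle 10 in this model), which is the kind of trade-off a hardware scheme has to tune.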

Additionally, integrated silicon capacitor (ISC) dies are strategically placed to embed distributed capacitance across the VDD rails, supporting both the NPU and HBM3E PHY. This significantly dampens voltage oscillations and lowers impedance peaks, reinforcing the power delivery network against the demands of both compute and memory traffic.
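A common rule of thumb for power delivery design shows why on-package capacitance matters at this power level: the network's impedance must stay below a target of roughly V_DD × allowed ripple / ΔI. The sketch below plugs in illustrative assumptions (a 0.75V rail, a 5% ripple budget, and the full 600W drawn from that one rail, with a surge to about double nominal); none of these are Rebel100 specifications, and the capacitor estimate treats the ISCs as ideal, ignoring ESR and ESL.

```python
import math

# Target-impedance rule of thumb for a power delivery network (PDN):
#   Z_target = V_DD * allowed_ripple / delta_I
# All inputs below are illustrative assumptions, not Rebel100 specifications.

def target_impedance(vdd: float, ripple_fraction: float, delta_i: float) -> float:
    """Maximum PDN impedance (ohms) that keeps droop within the ripple budget."""
    return vdd * ripple_fraction / delta_i


def capacitance_for(z_target: float, freq_hz: float) -> float:
    """Ideal capacitance whose reactance 1/(2*pi*f*C) equals z_target at freq_hz."""
    return 1 / (2 * math.pi * freq_hz * z_target)


VDD = 0.75                     # assumed core rail voltage
nominal_current = 600 / VDD    # 800 A if all 600W came from this one rail
delta_i = nominal_current      # a surge to ~2x nominal adds another ~800 A

z = target_impedance(VDD, 0.05, delta_i)
c = capacitance_for(z, 10e6)   # evaluated at an assumed 10 MHz
print(f"Z_target = {z * 1e6:.1f} micro-ohms, C at 10 MHz = {c * 1e6:.0f} uF")
```

A target in the tens of micro-ohms is hard to meet from board-level capacitors alone, which is the motivation for placing distributed capacitance as close to the dies as possible.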

For improved reliability in commercial deployments, Rebellions has added several features beyond standard UCIe functionality, including multiple loopback modes, transaction-level tracking, and channel-level diagnostics. A configurable switching mode trades a small amount of performance for longer Mean Time Between Failures (MTBF) and Mean Time To Failure (MTTF), which is crucial for uptime in large AI clusters.
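Why per-device reliability matters so much at cluster scale follows from basic reliability math: if any one accelerator failing interrupts a job and failures are independent with constant rates, the failure rates add, so cluster MTBF is the device MTBF divided by the device count. The sketch below uses hypothetical device MTBF values and a 10,000-accelerator cluster, in line with the "tens of thousands" scale mentioned earlier.

```python
import math

# Series-system reliability with independent, constant-rate (exponential) failures.
# The per-device MTBF values are hypothetical, chosen only for illustration.

def cluster_mtbf_hours(device_mtbf_hours: float, devices: int) -> float:
    """Failure rates add, so MTBF shrinks in proportion to the device count."""
    return device_mtbf_hours / devices


def p_no_failure(job_hours: float, mtbf_hours: float) -> float:
    """Probability that a job of the given length sees no failure."""
    return math.exp(-job_hours / mtbf_hours)


for device_mtbf in (500_000, 1_000_000):  # hypothetical device MTBF in hours
    mtbf = cluster_mtbf_hours(device_mtbf, 10_000)
    print(device_mtbf, mtbf, round(p_no_failure(24, mtbf), 3))
# 500000 50.0 0.619
# 1000000 100.0 0.787
```

Doubling device MTBF doubles cluster MTBF, so even a modest performance sacrifice can be worthwhile if it meaningfully raises the odds that a day-long job runs uninterrupted.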

Pros and Cons

Pros:

- Pioneering UCIe-A Adoption: One of the first to implement quad-chiplet UCIe-Advanced interconnects, showcasing a path forward for multi-chiplet designs.

- Claimed Performance & Efficiency: Aims to match Nvidia H200 performance at a 600W TDP versus the H200's 700W, which would mean roughly 17% better performance per watt if the claims hold.

- Seamless Multi-Chiplet Integration: Custom NoC and low-latency UCIe-A make the four chiplets behave as a single logical processor, simplifying software.

- Advanced Power Delivery: Proprietary hardware staggering and integrated silicon capacitors effectively manage transient power demands.

- Robust Reliability Features: Custom diagnostics and configurable reliability modes are valuable for enterprise deployments.

- Optimized for Yield: Leverages smaller, easier-to-manufacture 320mm² dies using Samsung's process and packaging.

Cons:

- Unverified Performance Claims: All performance metrics are vendor-supplied and await independent validation.

- Higher Silicon Footprint: Reaches its performance with four 320mm² chiplets (1,280mm² of NPU silicon), likely more total silicon than a hypothetical single, highly optimized monolithic die of equivalent performance, though the multi-die approach brings yield benefits.

- Proprietary Protocols: Relies on vendor-defined streaming and memory-semantics protocols instead of fully adopting CXL-based UCIe 1.0 mappings, potentially impacting broader ecosystem compatibility.

- New Entrant: As a new player, ecosystem support and market penetration are still unproven compared to established alternatives.

Rebellions Rebel100 vs. Nvidia H200 Comparison

| Feature | Rebellions Rebel100 | Nvidia H200 (as referenced) |
|---|---|---|
| Architecture | Quad-chiplet (4x 320mm² NPU dies) | Monolithic (typically) |
| Interconnect | UCIe-Advanced die-to-die (mesh topology) | Proprietary (e.g., NVLink) |
| Memory | 144GB HBM3E (4x 36GB stacks) | Referenced as having high-bandwidth memory |
| Performance (no sparsity) | 2 FP8 PFLOPS / 1 FP16 PFLOPS | Comparable: 2 FP8 PFLOPS / 1 FP16 PFLOPS |
| TDP | 600W | 700W |
| Process technology | Samsung SF4X | Not specified in source |

Note: H200 details are based on comparisons made within the source content, not external knowledge.

Buying Recommendation

The Rebellions Rebel100 presents a compelling value proposition for data centers, cloud providers, and enterprises heavily invested in large language model inference, especially those prioritizing power efficiency and interested in cutting-edge packaging technologies. If independent verification confirms Rebellions' performance and power claims, the Rebel100 could be a strong contender, offering similar raw performance to Nvidia H200 with a lower operational cost due to reduced power consumption. Its innovative multi-chiplet approach, robust power delivery, and focus on reliability make it suitable for scaling out large AI clusters. However, potential buyers should weigh the benefits of its custom architecture and early adoption of UCIe against the maturity and broader ecosystem support of established solutions. Early adopters and partners looking to build next-generation AI infrastructure will find the Rebel100 particularly attractive.

FAQ

Q: What makes the Rebel100's design unique?

A: The Rebel100 is unique for being one of the first multi-chiplet AI accelerators to use UCIe-Advanced interconnects to combine four NPUs into a single package. It also features a custom internal NoC that logically treats the four chiplets as one processor, along with proprietary hardware staggering for power delivery and integrated silicon capacitors for voltage stability.

Q: How does Rebel100 compare in performance to Nvidia H200?

A: Rebellions claims the Rebel100 can achieve 2 FP8 PFLOPS or 1 FP16 PFLOPS without sparsity, which is stated to be in line with what Nvidia's H200 delivers. Crucially, the Rebel100 achieves this at a lower power envelope of 600W compared to the H200's 700W, offering better power efficiency, though these claims await independent verification.

Q: What are the key advantages of using UCIe in Rebel100?

A: The primary advantage of UCIe-Advanced in the Rebel100 is enabling high-bandwidth (4 TB/s aggregated) and low-latency (11ns FDI-to-FDI) interconnection between the four chiplets. This allows the system-in-package to behave as a single, unified processor, simplifying software development and maximizing performance in a multi-die configuration.
