IndexCache Speeds Long-Context AI Models by 1.82x

IndexCache, a sparse attention optimizer from Tsinghua University and Z.ai, accelerates long-context AI models. It removes up to 75% of indexer computation, delivering up to 1.82x faster time-to-first-token and lower serving costs.

Researchers from Tsinghua University and Z.ai have unveiled IndexCache, a sparse attention optimizer designed to accelerate long-context artificial intelligence models. The technique removes up to 75% of the indexer computation in models that use the DeepSeek Sparse Attention (DSA) architecture. In tests at a context length of 200,000 tokens, it delivered up to 1.82x faster time-to-first-token and 1.48x higher generation throughput. The gains promise to make demanding AI applications more responsive and cost-effective for enterprises.

Addressing the DSA Bottleneck

Large Language Models (LLMs) fundamentally rely on the self-attention mechanism, which calculates relationships between every token in a sequence. However, this process scales quadratically with context length, leading to significant computational and memory costs for extensive tasks like document analysis or multi-step agentic workflows. Sparse attention, as implemented in architectures like DeepSeek Sparse Attention (DSA), addresses this by having queries attend to only the most relevant subset of tokens, transforming core attention from quadratic to linear complexity.

Despite DSA's efficiency gains, a critical bottleneck remains. The "lightning indexer module" within DSA, responsible for selecting these relevant tokens, still operates with quadratic complexity at each layer. As context lengths expand, the computational load from these indexers grows sharply, particularly during the initial prompt processing, known as the "prefill" stage. This "indexer tax" substantially limits overall model speed.
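A rough way to see the "indexer tax" is to count how many query-key pairs each component touches during prefill. The sketch below is illustrative only: it counts pairs, not FLOPs (the indexer is far cheaper per pair than full attention), and the `top_k` value of 2048 and the function names are assumptions, not figures from the article.

```python
def causal_pairs(context_len: int) -> int:
    """Pairs scored by one indexer under causal masking: 1 + 2 + ... + L."""
    return context_len * (context_len + 1) // 2


def sparse_pairs(context_len: int, top_k: int) -> int:
    """Pairs touched by sparse attention: each query attends to at most top_k keys."""
    if context_len <= top_k:
        return causal_pairs(context_len)
    # The first top_k queries see all earlier tokens; the rest see exactly top_k.
    return causal_pairs(top_k) + (context_len - top_k) * top_k


if __name__ == "__main__":
    TOP_K = 2048  # assumed selection budget, for illustration only
    for n in (8_000, 64_000, 200_000):
        ratio = causal_pairs(n) / sparse_pairs(n, TOP_K)
        print(f"{n:>7} tokens: indexer scores {ratio:.1f}x more pairs than sparse attention")
```

Under these assumptions the indexer's pair count grows with the square of the context length while the sparse attention's grows linearly, so the gap widens as prompts get longer.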

IndexCache's Innovative Solution

The research team pinpointed a key inefficiency: the selected important tokens often remain consistent across consecutive transformer layers in DSA models, with empirical data showing 70% to 100% overlap. IndexCache capitalizes on this cross-layer redundancy by partitioning the model's layers into "full" (F) and "shared" (S) categories.
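The overlap the researchers describe can be measured by comparing the sets of token positions that two layers select. The following is a minimal sketch with made-up scores; the helper names and the toy values are hypothetical and are not taken from the paper.

```python
from typing import List, Set


def top_k_indices(scores: List[float], k: int) -> Set[int]:
    """Return the positions of the k highest-scoring tokens."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return set(order[:k])


def overlap_ratio(selected_a: Set[int], selected_b: Set[int]) -> float:
    """Fraction of layer A's selected tokens that layer B also selects."""
    if not selected_a:
        return 1.0
    return len(selected_a & selected_b) / len(selected_a)


# Toy indexer scores for one query at two consecutive layers (illustrative).
layer_a_scores = [0.9, 0.1, 0.8, 0.3, 0.7, 0.2]
layer_b_scores = [0.8, 0.2, 0.9, 0.6, 0.1, 0.3]

layer_a = top_k_indices(layer_a_scores, k=3)
layer_b = top_k_indices(layer_b_scores, k=3)
overlap = overlap_ratio(layer_a, layer_b)
```

A ratio near 1.0 means the second layer's indexer mostly repeats work the first has already done, which is the redundancy IndexCache exploits.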

In this innovative design, only a few F layers actively calculate and cache fresh token indices. The majority, the S layers, bypass this intensive computation entirely, instead reusing the cached indices from the nearest preceding F layer. This approach directly targets the compute bottleneck of the indexers, differing from traditional KV cache compression techniques which focus on memory footprint. According to Yushi Bai, co-author of the paper, IndexCache is complementary to existing methods and can be combined with them.
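The F/S scheme can be sketched in a few lines: walk the layers in order, run the indexer only at F layers, and hand the cached result to every S layer that follows. This is a simplified illustration, not the authors' implementation; the pattern string and the `share_indices` function are hypothetical.

```python
from typing import Callable, List, Optional, Sequence


def share_indices(
    pattern: str,
    indexers: Sequence[Callable[[], List[int]]],
) -> List[List[int]]:
    """Return the token indices each layer uses under an F/S pattern.

    pattern  -- one character per layer: 'F' (compute) or 'S' (reuse).
    indexers -- one callable per layer that computes that layer's indices.
    """
    if len(pattern) != len(indexers):
        raise ValueError("pattern and indexers must have the same length")
    if not pattern or pattern[0] != "F":
        raise ValueError("the first layer must be 'F': there is nothing to reuse yet")

    cached: Optional[List[int]] = None
    per_layer: List[List[int]] = []
    for kind, indexer in zip(pattern, indexers):
        if kind == "F":
            cached = indexer()  # fresh computation, result is cached
        elif kind != "S":
            raise ValueError(f"unknown layer kind: {kind!r}")
        # 'S' layers skip their indexer and reuse the nearest preceding F result.
        per_layer.append(list(cached))
    return per_layer
```

With a pattern such as "FSSSFSSS", only two of eight indexers run, which corresponds to the 75% reduction cited in the article.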

Flexible Deployment and Training

IndexCache offers two primary deployment strategies. For developers working with pre-existing DSA models, a training-free method uses a "greedy layer selection" algorithm. This algorithm, calibrated with a small dataset, decides which layers stay F and which become S, allowing up to 75% of indexers to be removed with little to no loss in benchmark quality.
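The article does not spell out the greedy procedure, so the following is one plausible reading rather than the paper's algorithm: start with every layer as F, then repeatedly convert to S whichever layer hurts a calibration loss the least. The `calib_loss` callback stands in for evaluating the model on the calibration set and, like `toy_loss` and its sensitivity values, is purely hypothetical.

```python
from typing import Callable


def greedy_layer_selection(
    num_layers: int,
    num_shared: int,
    calib_loss: Callable[[str], float],
) -> str:
    """Greedily choose which layers become 'S'; returns a pattern like 'FSSF'."""
    if not 0 <= num_shared < num_layers:
        raise ValueError("num_shared must leave at least the first layer as 'F'")
    pattern = ["F"] * num_layers
    for _ in range(num_shared):
        best_layer, best_loss = -1, float("inf")
        for layer in range(1, num_layers):  # layer 0 always stays 'F'
            if pattern[layer] == "S":
                continue
            trial = pattern.copy()
            trial[layer] = "S"
            loss = calib_loss("".join(trial))
            if loss < best_loss:
                best_layer, best_loss = layer, loss
        pattern[best_layer] = "S"
    return "".join(pattern)


def toy_loss(pattern: str) -> float:
    """Stand-in loss: reuse costs more the further a layer is from its F source."""
    sensitivity = [5, 1, 1, 4, 1, 1, 3, 1]  # made-up per-layer sensitivities
    loss, distance = 0.0, 0
    for kind, weight in zip(pattern, sensitivity):
        distance = 0 if kind == "F" else distance + 1
        loss += weight * distance
    return loss


chosen = greedy_layer_selection(num_layers=8, num_shared=6, calib_loss=toy_loss)
```

A greedy search of this kind evaluates on the order of the number of layers squared candidate patterns, far fewer than the exponential number of possible F/S assignments, but it is not guaranteed to find the best one.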

For teams building or extensively fine-tuning foundation models, a training-aware approach integrates a "multi-layer distillation loss" during training. This method trains indexers to select a consensus set of tokens relevant for multiple subsequent layers, inherently optimizing the network for cross-layer sharing. IndexCache is currently applicable to models built on the DSA architecture, including the latest DeepSeek and GLM families.
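The article gives no formula for the multi-layer distillation loss. One way to express the "consensus" idea, assumed here for illustration, is to penalize the indexer's token distribution by its average KL divergence from the attention distributions of all the layers it serves. The function names and the toy distributions below are hypothetical.

```python
import math
from typing import List, Sequence


def kl_divergence(target: Sequence[float], predicted: Sequence[float], eps: float = 1e-12) -> float:
    """KL(target || predicted) for two discrete distributions over tokens."""
    return sum(
        t * math.log((t + eps) / (p + eps))
        for t, p in zip(target, predicted)
        if t > 0
    )


def multi_layer_distillation_loss(
    indexer_dist: Sequence[float],
    served_attention: List[Sequence[float]],
) -> float:
    """Mean KL from each served layer's attention distribution to the indexer's."""
    if not served_attention:
        raise ValueError("the indexer must serve at least one layer")
    total = sum(kl_divergence(attn, indexer_dist) for attn in served_attention)
    return total / len(served_attention)


# Two layers that care about different tokens (toy values).
attn_layer_1 = [0.7, 0.2, 0.1]
attn_layer_2 = [0.1, 0.2, 0.7]
consensus = [0.4, 0.2, 0.4]  # element-wise average of the two

loss_consensus = multi_layer_distillation_loss(consensus, [attn_layer_1, attn_layer_2])
loss_single = multi_layer_distillation_loss(attn_layer_1, [attn_layer_1, attn_layer_2])
```

In this formulation the loss is lowest when the indexer matches the average of the served layers' distributions, so an indexer tuned to a single layer scores worse than one that covers the tokens all of its layers need.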

Real-World Performance Gains

Extensive evaluations on the 30-billion-parameter GLM-4.7 Flash model demonstrated significant real-world speedups. At a context length of 200,000 tokens, IndexCache, with 75% of indexers eliminated, reduced prefill latency from 19.5 seconds to 10.7 seconds, a 1.82x acceleration. During the decoding phase, per-request throughput jumped by 1.48x, from 58 to 86 tokens per second. Under full server load, total decode throughput saw an increase of up to 51%.
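The reported speedups follow directly from the raw measurements, as this short check shows. The variable names are illustrative; the figures are the ones quoted above.

```python
# Measurements quoted in the article (GLM-4.7 Flash, 200,000-token context).
prefill_baseline_s = 19.5
prefill_indexcache_s = 10.7
decode_baseline_tps = 58
decode_indexcache_tps = 86

# Latency speedup: old time divided by new time.
prefill_speedup = prefill_baseline_s / prefill_indexcache_s
# Throughput speedup: new rate divided by old rate.
decode_speedup = decode_indexcache_tps / decode_baseline_tps
# Absolute time saved before the first token appears.
seconds_saved = prefill_baseline_s - prefill_indexcache_s

print(f"prefill speedup: {prefill_speedup:.2f}x")
print(f"decode speedup:  {decode_speedup:.2f}x")
print(f"time-to-first-token saved: {seconds_saved:.1f} s")
```

Note that latency and throughput ratios are computed in opposite directions: a lower time and a higher rate both count as a speedup.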

These efficiency gains directly translate into reduced operational costs for enterprises. Bai noted that long-context workloads such as Retrieval-Augmented Generation (RAG), document analysis, and agentic pipelines could see approximately a 20% reduction in deployment costs along with improved user-perceived latency. For shorter contexts, the benefit is smaller, averaging around 5%.

Crucially, these performance enhancements did not compromise reasoning capabilities. The training-free IndexCache-optimized 30B model maintained an average score of 49.9 on long-context benchmarks, nearly matching the original baseline's 50.2. It even surpassed the baseline on the complex AIME 2025 math reasoning benchmark, scoring 92.6 compared to 91.0. Preliminary tests on the massive 744-billion-parameter GLM-5 model also showed at least a 1.3x speedup on contexts over 100K while maintaining quality.

Future Outlook and Accessibility

Implementing the training-free IndexCache approach requires a calibration dataset that reflects real-world domain-specific workloads to optimize layer sharing patterns. Once calibrated, deployment is straightforward, with open-source patches already available on GitHub for popular inference engines like vLLM and SGLang.

Yushi Bai emphasizes that IndexCache's underlying philosophy signals a shift in AI model design. "Future foundation models will likely be architected with downstream inference constraints in mind from the beginning," he stated, suggesting a move towards designs inherently optimized for throughput and latency, rather than treating these as afterthoughts.

FAQ

Q: Which AI models can benefit from IndexCache?

A: IndexCache is specifically designed for models that utilize the DeepSeek Sparse Attention (DSA) architecture, including the latest DeepSeek models and the GLM family of models.

Q: How much faster can IndexCache make AI inference?

A: Depending on the context length and specific model, IndexCache can deliver significant speedups. For a 200,000-token context, it achieved up to 1.82x faster time-to-first-token and 1.48x faster generation throughput in tests, with preliminary results showing at least 1.3x speedup on very large models like GLM-5.

Q: Does IndexCache reduce the quality or accuracy of AI model outputs?

A: Not meaningfully, according to the research. The training-free optimized 30B model scored 49.9 on long-context benchmarks against a baseline of 50.2, and it slightly outperformed the baseline on the AIME 2025 math reasoning benchmark, 92.6 to 91.0.

Related articles

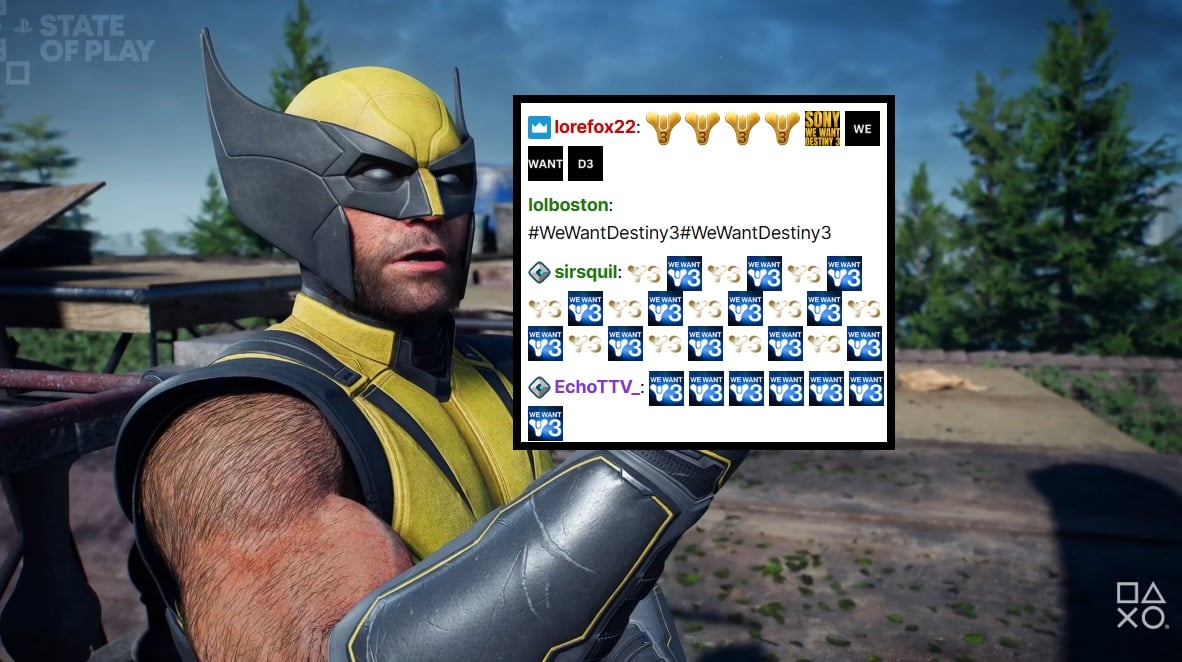

PlayStation Showcase Chat Swamped by Demands for Destiny 3

PlayStation's recent State of Play showcase was largely overshadowed by an impassioned fan campaign in the Twitch chat, demanding 'Destiny 3'. Amidst reveals for new PS5 games, the chat was relentlessly spammed with #WeWantDestiny3, fueled by the unexpected sunsetting of Destiny 2 and the reported absence of a direct sequel. This digital protest reflects widespread community frustration, amplified by a popular streamer and a petition with over 330,000 signatures.

Microsoft Unveils ASSERT, Simplifying AI Behavior Testing with Text

Microsoft has launched ASSERT, an open-source framework designed to simplify AI behavior testing. It enables developers to create comprehensive, application-specific evaluations using natural language descriptions, ensuring AI systems act as intended for particular products and services. The tool translates high-level goals into structured tests, generates scenarios, scores results, and logs execution paths.

Trump Orders Voluntary AI Model Review Before Release

President Trump has signed an executive order creating a voluntary framework for AI companies to share advanced models with the federal government before release. This initiative aims to bolster secure innovation and protect critical infrastructure, reflecting a shift from the administration's previous hands-off approach to AI safety. Companies opting for pre-release review may receive confidentiality protections.

Quick Share Meets AirDrop: A Welcome Cross-Platform Step

Quick Verdict: A Much-Anticipated Bridge For years, seamless file sharing between Android and iOS devices has been a frustrating chasm, often requiring clunky workarounds or third-party apps. This month, Google is

Blue Origin's New Glenn Explosion: Key Components Survive, 2026

Blue Origin announced that critical fuel tanks and key launch pad components survived last week's New Glenn rocket explosion, paving a faster path back to flight. CEO Dave Limp pledges a return to orbital missions before year-end, which is crucial for NASA's Artemis lunar program to maintain its tight schedule for crewed landings.

ZeroDrift raises $10M to protect AI models from themselves: AI

ZeroDrift, an AI compliance startup, has secured $10 million in seed funding from investors like a16z Speedrun. The company's service acts as a crucial intermediary, detecting compliance violations in AI-generated messages and rewriting them to meet regulatory standards like SOC 2 and GDPR. This rapid, oversubscribed funding round highlights the urgent demand for robust AI governance solutions as businesses scale AI adoption.