Dissecting Intel's BOT: Impact on Geekbench 6 Performance

Intel's Binary Optimization Tool (BOT) raises overall Geekbench 6.3 scores by 5.5% and individual workload scores by up to 30%, primarily through aggressive vectorization. However, BOT is opaque, supports only a handful of applications, and introduces a startup delay. This raises concerns about fair benchmarking: BOT-optimized results measure peak rather than typical performance, giving Intel CPUs an unrealistic advantage over competitors.

As developers, we often rely on benchmarks to gauge hardware performance, inform architectural decisions, and optimize our applications. So, when a tool like Intel's Binary Optimization Tool (BOT) enters the scene, significantly impacting benchmark results in a non-transparent manner, it warrants a closer look. We recently investigated BOT's behavior with Geekbench 6 to understand its mechanics and the implications for performance analysis.

What is Intel's Binary Optimization Tool (BOT)?

Intel's BOT is designed to enhance application performance by modifying instruction sequences within executables. While its promise of optimization sounds appealing, public documentation on BOT is notably sparse. Furthermore, its applicability is highly restricted, supporting only a select few applications, among them Geekbench 6. This limited scope immediately raises questions about the representativeness of its performance boosts.

The Investigation: Setup and Startup Overhead

We tested Geekbench 6.3 and 6.7 on a Panther Lake laptop, an MSI Prestige 16 AI+ equipped with an Intel Core Ultra 9 386H processor, and compared results with BOT enabled and disabled.

One of the first observations was the noticeable startup overhead when BOT was active:

- Geekbench 6.3 with BOT: The initial run experienced a significant 40-second delay before the program launched. Subsequent runs were quicker, settling at a 2-second delay. This delay vanished completely when BOT was disabled.

- Geekbench 6.7 with BOT: All runs consistently showed a 2-second startup delay. Again, disabling BOT eliminated this delay.
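
Launch overhead like this can be quantified by timing a process from spawn to exit over several runs. The sketch below is a generic timing harness, not the method used in this investigation. `time_launches` and the placeholder command are hypothetical, and timing a full run only approximates startup delay when the command itself does very little work.

```python
import subprocess
import sys
import time


def time_launches(command, runs=3):
    """Return wall-clock seconds from spawn to exit for each run of `command`."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        subprocess.run(
            command,
            check=True,
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
        )
        durations.append(time.perf_counter() - start)
    return durations


if __name__ == "__main__":
    # Placeholder command: a Python interpreter that exits immediately.
    # Substitute the executable under test.
    command = [sys.executable, "-c", "pass"]
    for i, seconds in enumerate(time_launches(command), start=1):
        print(f"run {i}: {seconds:.3f} s")
```

Comparing the first run against later ones is what would expose a one-time cost such as the 40-second delay seen with Geekbench 6.3.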

Further investigation during these startup delays revealed BOT was computing a checksum of the Geekbench executable. This suggests BOT uses this checksum to identify binaries it is configured to optimize.
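
The kind of lookup this implies can be sketched as follows. Everything here is an assumption for illustration: the checksum algorithm BOT uses and the way it stores its list of supported binaries are not public, so SHA-256 and the `supported` table are stand-ins, and `file_checksum` and `find_profile` are hypothetical names.

```python
import hashlib


def file_checksum(path, chunk_size=1 << 20):
    """Hash a file in chunks so a large executable is never fully loaded into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_profile(path, supported):
    """Return the entry registered for this exact binary, or None if it is unknown.

    `supported` maps a hex digest to whatever describes the optimization
    (hypothetical; the real format is not documented).
    """
    return supported.get(file_checksum(path))
```

Because changing any byte of the file changes the hash, a lookup like this matches only specific builds, which would be consistent with Geekbench 6.3 and 6.7 being treated differently.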

Geekbench Results: A Tale of Two Versions

When we examined the benchmark scores, a clear pattern emerged, highlighting BOT's selective optimization:

- Geekbench 6.3: With BOT enabled, both single-core and multi-core scores increased by 5.5%. Individual workloads such as Object Remover and HDR gained far more, with scores rising by up to 30%.

- Geekbench 6.7: In contrast, Geekbench 6.7 showed virtually no change. Single-core scores remained identical, and multi-core scores rose by only 0.9%. This supports our suspicion that BOT targets specific binary versions for its optimizations.

Under the Hood: Unveiling BOT's Optimization Techniques

To truly understand BOT's impact, we utilized Intel's Software Development Emulator (SDE) – a powerful tool for monitoring executed instructions and identifying SIMD extension usage. We focused our analysis on the HDR workload from Geekbench 6.3, given its substantial performance improvement under BOT.

Running the HDR workload for 100 iterations with SDE, we compared instruction counts:

- Total Instructions: Decreased by 14% with BOT enabled.

- Scalar Instructions: Decreased by 62% with BOT enabled.

- Vector Instructions: Increased by 1366% with BOT enabled, or roughly 14.7 times as many.

These numbers are highly revealing. BOT isn't just reordering code; it's performing sophisticated transformations, primarily through extensive vectorization. This means converting instructions that typically operate on a single value into instructions that process eight values concurrently. This level of optimization is far more advanced than the simpler code-reordering techniques typically disclosed in Intel's public documentation.
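
To make the effect concrete, the toy model below counts the operations needed to add two arrays one value at a time versus eight values at a time. It is an illustration only: real instruction counts depend on the actual workload, and the function names and the 8-lane width are chosen to match the description above rather than taken from BOT.

```python
def scalar_add(a, b):
    """Add element by element; one add operation per value."""
    out, ops = [], 0
    for x, y in zip(a, b):
        out.append(x + y)
        ops += 1
    return out, ops


def vector_add(a, b, lanes=8):
    """Add in chunks of `lanes` values; each chunk models one vector operation."""
    out, ops = [], 0
    for i in range(0, len(a), lanes):
        out.extend(x + y for x, y in zip(a[i:i + lanes], b[i:i + lanes]))
        ops += 1
    return out, ops


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


a = list(range(64))
b = list(range(64, 128))
scalar_result, scalar_ops = scalar_add(a, b)
vector_result, vector_ops = vector_add(a, b)

assert scalar_result == vector_result  # same answer either way
print(scalar_ops, vector_ops)  # 64 8
print(percent_change(scalar_ops, vector_ops))  # -87.5
```

This is why total instructions can fall even as vector instructions rise sharply: each added vector instruction can stand in for several scalar ones.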

The Broader Implications for Benchmarking

From a developer's perspective, these findings raise significant concerns regarding fair and representative performance measurement:

- Peak vs. Typical Performance: Geekbench is designed to reflect varied real-world application code. BOT, by replacing this diverse code with highly processor-tuned binaries, essentially measures a CPU's peak potential under specific, optimized conditions, rather than its typical performance across a broad range of applications.

- Unfair Comparability: Since BOT supports only a handful of applications, an Intel processor running a BOT-optimized benchmark like Geekbench 6.3 will appear artificially faster when compared to other vendors (e.g., AMD) that cannot leverage such aggressive, proprietary optimizations. This is particularly evident when BOT enables Intel CPUs to execute vector instructions while other architectures might still be running scalar equivalents.

- Drawbacks: The persistent 2-second startup delay, even for subsequent runs, is a practical drawback, especially for short-lived processes.

Ultimately, while vectorization is a legitimate and powerful optimization technique, its opaque and selective application through BOT undermines the integrity of cross-platform benchmark comparisons.

Geekbench's Response and Future Outlook

Recognizing these issues, Geekbench is taking proactive steps:

- All BOT-optimized results will continue to be flagged in the Geekbench Browser to ensure transparency.

- Geekbench 6.7, which is rolling out soon, will incorporate built-in detection for BOT. Results will be flagged when BOT is running, and the warning can be removed from Geekbench 6.7+ results when BOT is not detected. This is a more precise approach than blanket flagging.

- Results from Geekbench 6.6 and earlier on Windows will continue to be flagged by default due to the lack of internal detection.
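
Read as a decision rule, the policy above looks roughly like the sketch below. It is one interpretation of the stated policy, not Geekbench's code. `should_flag` and its parameters are hypothetical, and the final branch (older versions on non-Windows systems) is an assumption, since the policy as described mentions only Windows.

```python
def parse_version(version):
    """Turn a version string such as '6.7' or '6.3.0' into a comparable tuple."""
    return tuple(int(part) for part in version.split("."))


def should_flag(version, os_name, bot_detected=None):
    """Return True if a result should carry the BOT warning.

    `bot_detected` is only meaningful for 6.7 and later, which have
    built-in detection; older versions cannot report it.
    """
    if parse_version(version) >= (6, 7):
        # Built-in detection: flag only when BOT was actually running.
        return bool(bot_detected)
    if os_name.lower() == "windows":
        # No internal detection before 6.7, so flag by default.
        return True
    # Assumption: this case is not covered by the stated policy.
    return False
```

For example, `should_flag("6.7", "Windows", bot_detected=False)` returns `False`, while `should_flag("6.3", "Windows")` returns `True`.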

As developers, understanding such deep-seated optimizations and their impact on performance metrics is crucial for making informed decisions about hardware and software.

FAQ

Q: What is the primary concern Geekbench has with Intel's BOT?

A: Geekbench's main concern is that BOT measures "peak" performance by replacing varied application code with processor-tuned, heavily optimized binaries. Since BOT only supports a select few applications, this creates an unrealistic and unfair benchmark comparison, making Intel processors appear faster relative to competitors than they would be in typical, real-world usage.

Q: How does BOT achieve its performance gains, specifically in workloads like HDR?

A: Our analysis revealed that BOT performs significant vectorization. For the HDR workload, it dramatically reduced scalar instructions while increasing vector instructions, effectively converting operations on single values into operations on eight values. This is a more advanced transformation than simple code reordering.

Q: What measures is Geekbench taking to address the impact of BOT on its scores?

A: Geekbench will continue to flag BOT-optimized results in its browser. Furthermore, Geekbench 6.7 and later versions will include built-in detection for BOT. This allows Geekbench to specifically flag results where BOT is active and remove warnings for 6.7+ results where BOT is not detected, aiming for clearer and more accurate performance comparisons.

Related articles

Great Question (YC W21) Seeks Applied AI Interns: A Deep Dive

As fellow developers, we’re constantly scanning the landscape for companies pushing the boundaries, especially in the rapidly evolving AI space. Great Question, a Y Combinator W21 alumnus, has caught our eye with an

startups: The White House is at war with itself over who gets to

An intense internal power struggle within the Trump administration has stalled US federal AI regulation, leaving a policy vacuum after Anthropic's Mythos model revealed critical cybersecurity risks. Factions within the Commerce Department, intelligence agencies, and pro-industry groups are locked in a "knife fight" over who gets to evaluate and oversee advanced AI systems. This paralysis follows the abrupt cancellation of a landmark executive order and the unexplained withdrawal of AI testing announcements.

Navigating the Global AI Arena: Beyond Silicon Valley's Borders

The international AI landscape presents unique challenges and opportunities, requiring developers to think beyond traditional tech hubs. Key aspects include adapting AI models to local languages and cultures, navigating the complex global supply chain for critical hardware like semiconductors, and understanding how venture capital assesses these international ventures. Success hinges on deep local market understanding, robust technical solutions for localization, and resilience against logistical hurdles.

Engineering a Solution: Debugging Global Mosquito-Borne Diseases

As developers, we're constantly tasked with solving complex problems, whether it's optimizing a database query or architecting a distributed system. But what if the 'bug' we're trying to fix is biological, with global

Self-Host S3-Compatible Object Storage with MinIO on Staging

This guide demonstrates how to self-host an S3-compatible object store using MinIO on your staging server. By leveraging Docker Compose and Traefik for HTTPS, you can significantly reduce cloud storage costs while maintaining a production-like environment for development and testing. It covers setup, application configuration, and secure file interactions.

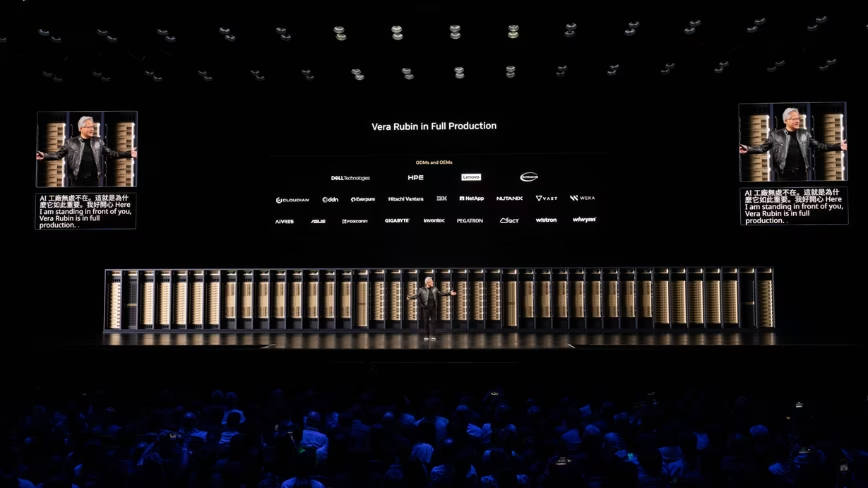

Jensen Huang Opens Computex: Vera Rubin in Production, Nvidia Eyes PCs

TAIPEI – Nvidia CEO Jensen Huang kicked off Computex 2026 in Taipei on Monday, June 1, with a keynote address that delivered two significant announcements set to reshape both the artificial intelligence landscape and