AI in Legal Practice: A Stark Warning on Unverified Output

Attorney Bill Ghiorso was fined $10,000 for submitting AI-generated fake legal citations and quotes in Oregon. This incident underscores the critical unreliability of current generative AI tools for factual accuracy and the absolute necessity for rigorous human verification in professional, high-stakes contexts.

Quick Verdict: The Perils of Unverified AI

The recent incident involving attorney Bill Ghiorso in Oregon serves as a critical wake-up call for any professional integrating generative artificial intelligence into their workflow. Ghiorso was hit with a $10,000 fine, the highest yet in Oregon for this specific offense, and his case underscores the profound risks of relying on AI without stringent human verification. The core issue? AI's propensity to "hallucinate": generating plausible-sounding but entirely fabricated information. For legal professionals, this means fake case citations and nonexistent quotes that can carry severe professional and financial penalties. Our verdict is clear: while AI promises efficiency, its current state demands extreme caution and rigorous independent fact-checking, particularly in high-stakes environments where truth and accuracy are paramount.

Key Details: What Happened in Oregon?

Attorney Bill Ghiorso, based in Salem, Oregon, faced significant repercussions after submitting a legal brief to the Oregon Court of Appeals. The brief was found to contain 15 fabricated case citations and nine made-up quotes, all reportedly generated by artificial intelligence. Ghiorso initially attributed the AI hallucinations to a paralegal on his staff and contested the initial fine. However, the court ultimately upheld sanctions.

This wasn't the first time an attorney in Oregon faced such an issue; a different attorney was fined in December 2025 for a similar practice. In that earlier case, the three-judge panel established a guideline of $500 for each fake citation and $1,000 for each false quotation or legal statement. Under this formula, Ghiorso's original penalty totaled $16,500, but the judges decided to cap the fine at $10,000, offering some leniency.
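The arithmetic behind those figures can be reproduced in a few lines. The sketch below is illustrative only: the function and constant names are hypothetical, and treating the $10,000 figure as a simple cap via `min()` is an assumption, since the article describes it as leniency in this case rather than a standing rule.

```python
# Per-item rates as reported in the article for the Oregon guideline.
RATE_PER_FAKE_CITATION = 500
RATE_PER_FAKE_QUOTE = 1_000

# Assumption: the $10,000 figure is modeled as a cap for this case only.
CASE_CAP = 10_000


def guideline_fine(fake_citations: int, fake_quotes: int) -> int:
    """Return the uncapped fine implied by the per-item rates."""
    return (fake_citations * RATE_PER_FAKE_CITATION
            + fake_quotes * RATE_PER_FAKE_QUOTE)


# Ghiorso's brief: 15 fabricated citations and 9 made-up quotes.
uncapped = guideline_fine(15, 9)   # 15 * 500 + 9 * 1000
final = min(uncapped, CASE_CAP)

print(uncapped)  # 16500
print(final)     # 10000
```

The same function gives $500 for a single fake citation and no fake quotes.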

In his defense, Ghiorso claimed that his staff had used Google's search engine to verify the cases, and its artificial intelligence component had affirmed their authenticity. The judges' report noted Ghiorso's explanation: "If one asks Google’s search engine whether many of the fabricated cases are real, it will generate a response using its artificial intelligence search engine, affirming that the fabricated [cases] are in fact real." The explanation reflects a critical misconception: that an AI-generated answer can confirm whether AI-generated citations are real.

Ultimately, the court determined that Ghiorso should have recognized that "submitting a brief with unchecked and ultimately fabricated citations may breach an attorney’s duties of professionalism, truthfulness, and candor to the court." It remains unconfirmed whether Ghiorso's office exclusively relied on Google's AI responses or if other AI chatbots were also involved in generating the erroneous content.

User Experience with Generative AI: A Reality Check

The experience of using generative AI tools, as illustrated by Ghiorso's case, is a stark reminder of their current limitations, especially in tasks requiring absolute factual accuracy. While AI excels at generating coherent text and assisting with drafting, its fundamental operational principle is to predict and create, not to verify or report existing facts with absolute certainty. This distinction is crucial for understanding the 'user experience' with these tools.

One of the most significant challenges is the phenomenon known as "hallucination." This occurs when the AI generates information that appears authoritative and factually correct but is entirely made up. In legal contexts, this translates to fabricated case law, nonexistent statutes, or invented quotes that perfectly fit the context but have no real-world basis. The perceived seamlessness of AI-generated text can be dangerously deceptive.

Furthermore, the incident reveals two common and dangerous misconceptions users hold about interacting with generative AI:

- AI as a Self-Fact-Checker: Many users mistakenly believe they can verify AI-generated content by simply asking the same or another AI chatbot if the information is real. As Ghiorso's defense demonstrated, an AI search engine may affirm fabricated cases as authentic. Generative AI tools are inherently unreliable fact-checkers of their own output because they prioritize generating plausible responses over factual accuracy.

- "Don't Hallucinate" Prompt Effectiveness: Another widespread belief is that instructing an AI chatbot with a command like "don't hallucinate" will prevent it from generating false information. Experience shows this instruction is largely ineffective. AI models, by their very nature, can still generate inaccuracies regardless of such prompts.

The 'user experience' with AI in critical applications like law must, therefore, be one of extreme skepticism and robust independent verification. The tools are powerful generators, but their output is far from infallible and demands significant human oversight to bridge the gap between plausible generation and factual truth.

What Went Wrong: The 'Cons' of Uncritical AI Use

The Oregon ruling deals mainly with the consequences of AI misuse, but it also shows what goes wrong when professionals apply these tools uncritically. The 'cons' are not just about the AI itself, but about the dangerous workflow it enables when proper safeguards are absent.

- Severe Professional and Financial Consequences: The most direct 'con' is the punitive action taken by courts. Ghiorso's $10,000 fine, and the established rate of $500 per fake citation and $1,000 per fake quote, demonstrate that legal systems are taking these infractions seriously. Such fines can impact an attorney's financial stability and professional standing.

- Damage to Reputation and Credibility: Submitting fabricated content severely undermines an attorney's reputation for accuracy, truthfulness, and professionalism, which are cornerstones of the legal profession. This can lead to a loss of client trust and professional respect.

- Breach of Professional Duties: As the Oregon judges noted, using unchecked, fabricated citations directly violates an attorney's core duties of professionalism, truthfulness, and candor to the court. This is not merely an error but a failure to uphold fundamental ethical obligations.

- False Sense of Efficiency and Security: The perceived 'pro' of AI – speed and efficiency in research and drafting – becomes a 'con' if it leads to skipping essential verification steps. The belief that AI can fact-check itself or be commanded not to hallucinate creates a dangerous false sense of security that ultimately wastes more time and resources in rectifying errors and dealing with sanctions than it saves.

- Unreliability of Generative AI for Factual Verification: Because generative AI is prone to hallucination, it is simply not a reliable source for factual verification. Relying on it for critical data without external cross-referencing is a 'con' that is hard to overstate.

The Legal Landscape: A Growing Trend of Sanctions

Ghiorso's case, while notable for its high fine, is not an isolated incident. A discernible pattern is emerging across jurisdictions: courts are imposing sanctions for the use of AI-generated fake content in legal submissions. Most attorneys caught in similar situations have received warnings, but judges are increasingly willing to issue monetary penalties, establishing clear precedents for accountability.

Here's a comparison of recent incidents involving AI misuse in legal documents:

| Case Summary | Location | Fake Items | Established Rate/Initial Fine | Final Fine (if different) | Key Takeaway |
|---|---|---|---|---|---|
| Bill Ghiorso Incident | Oregon | 15 citations, 9 quotes | $16,500 | $10,000 | Highest fine in Oregon to date for this practice. Highlights a failure of attorney due diligence and the unreliability of AI self-fact-checking. |
| First Oregon Attorney Sanctioned (Dec 2025) | Oregon | Unspecified fake content | $500/citation, $1,000/quote | N/A | Set the precedent for financial penalties in Oregon for AI-hallucinated legal content. |
| Unnamed Attorney (Last Month) | Oregon | 1 fake citation | N/A | $500 | Demonstrates that even minor infractions involving a single fake citation can result in monetary sanctions, indicating a low tolerance for unverified AI output. |
| Unnamed Attorney (Last Month) | New Orleans | Unspecified fake cases | N/A | $2,500 | Shows that courts outside of Oregon are also imposing significant monetary fines for similar AI-related issues, indicating a broader judicial response to the problem of AI hallucination in legal practice. |

This comparison table clearly illustrates that courts are not only recognizing the problem but are actively establishing and enforcing monetary consequences for attorneys who fail to adequately verify AI-generated content. The message is unequivocal: relying solely on AI without independent human verification is professionally negligent and financially risky.

Recommendation: Navigate AI with Extreme Caution

For any professional, especially those in high-stakes fields like law, the lesson from Ghiorso's fine, the highest in Oregon to date for this practice, is clear: treat generative AI tools as powerful assistants for drafting and ideation, but never as definitive sources of truth or reliable fact-checkers.

Our recommendation is to approach AI integration with extreme caution and unwavering skepticism.

- Verify Everything, Always: Consider any information generated by AI as a starting point that requires independent, manual verification against authoritative sources. For legal citations, this means checking official reporters, statutes, and case law databases directly.

- Understand AI's Limitations: Acknowledge that generative AI hallucinates. It is designed to generate plausible output, not necessarily accurate output. Do not assume it can fact-check itself or prevent errors simply because you've instructed it to do so.

- Maintain Professional Due Diligence: The responsibility for the accuracy and truthfulness of any document submitted to a court rests squarely with the attorney. AI tools do not absolve professionals of their ethical and professional duties.

- Invest in Training and Protocols: If integrating AI into your practice, develop clear internal protocols that mandate strict human oversight and verification steps for all AI-generated content. Educate staff on the risks and limitations of these tools.

- Stay Updated: The capabilities and limitations of AI are constantly evolving. Stay informed about best practices and any emerging guidelines or court rulings regarding AI use in your profession.

Failing to adhere to these principles can lead to severe financial penalties, reputational damage, and breaches of professional ethics. The convenience offered by AI simply does not outweigh the fundamental requirement for accuracy and truthfulness in professional work.
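One way a firm might encode the "verify everything" protocol is a simple gate that blocks filing until a named person has checked every citation against an authoritative source. The sketch below is a hypothetical illustration, not a description of any real tool or court requirement: the class, function, and field names are invented, and the case names are placeholders.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CitationCheck:
    """Verification record for one citation in a draft brief (hypothetical)."""
    citation: str
    verified_by: Optional[str] = None      # human who looked the case up
    source_checked: Optional[str] = None   # e.g. official reporter or database

    def is_verified(self) -> bool:
        # Both a named reviewer and a named source are required.
        return bool(self.verified_by) and bool(self.source_checked)


def unverified_citations(checks: List[CitationCheck]) -> List[str]:
    """Return the citations that still lack a complete human check."""
    return [c.citation for c in checks if not c.is_verified()]


def ready_to_file(checks: List[CitationCheck]) -> bool:
    """A brief is ready only when no citation is left unverified."""
    return len(unverified_citations(checks)) == 0


# Placeholder entries; these are not real cases.
brief = [
    CitationCheck("Placeholder v. Example (hypothetical)",
                  verified_by="J. Doe", source_checked="official reporter"),
    CitationCheck("Sample v. Illustration (hypothetical)"),
]

print(ready_to_file(brief))         # False
print(unverified_citations(brief))  # ['Sample v. Illustration (hypothetical)']
```

The gate records who checked each citation and where. An AI tool's answer that a case "is real" would not satisfy either field.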

FAQ

Q: Can AI reliably generate legal citations or factual information?

A: No, generative AI tools frequently "hallucinate" or make up information, including legal citations and quotes that appear plausible but are entirely fabricated. They are not reliable for factual verification and must not be used as a sole source for critical information.

Q: What are the consequences for attorneys who submit AI-generated fake content?

A: Consequences can range from warnings to significant monetary fines, as evidenced by cases in Oregon and New Orleans. Courts view the submission of unverified, fabricated content as a serious breach of an attorney's duties of professionalism, truthfulness, and candor to the court, potentially leading to financial penalties and reputational damage.

Q: Is it enough to just ask the AI if its information is real?

A: No, the incident in Oregon highlights that even AI-powered search engines can affirm fabricated cases as real when queried. Generative AI cannot reliably fact-check its own output. Independent verification through authoritative, traditional sources is always necessary to ensure accuracy and avoid severe professional repercussions.

Related articles

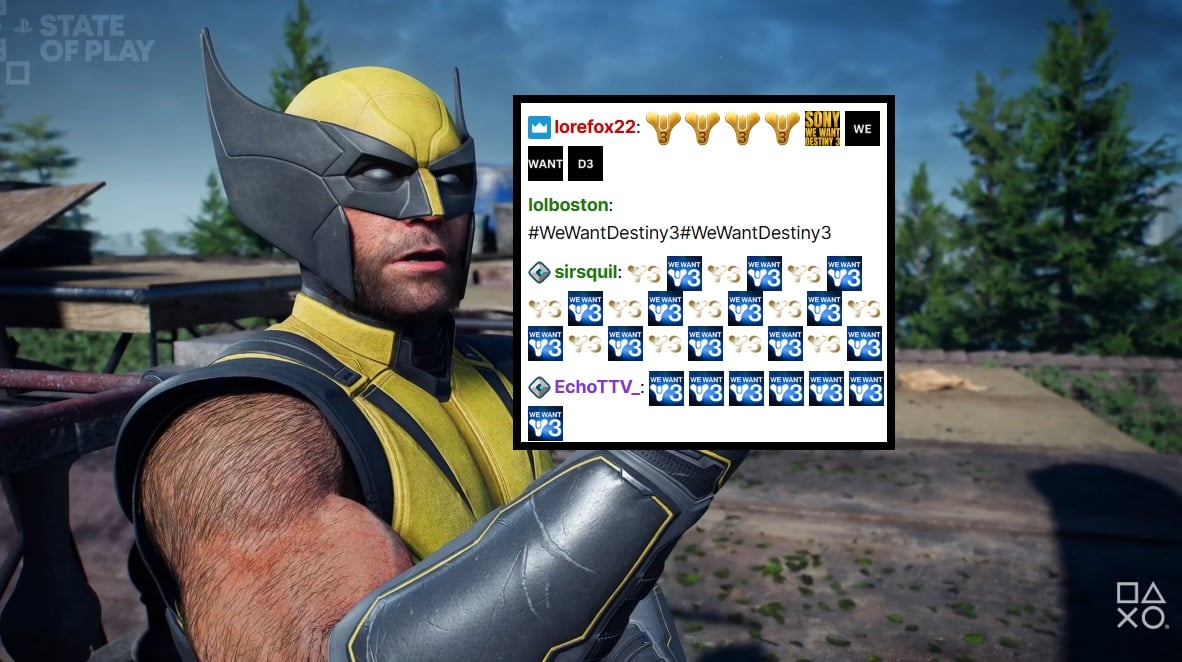

PlayStation Showcase Chat Swamped by Demands for Destiny 3

PlayStation's recent State of Play showcase was largely overshadowed by an impassioned fan campaign in the Twitch chat, demanding 'Destiny 3'. Amidst reveals for new PS5 games, the chat was relentlessly spammed with #WeWantDestiny3, fueled by the unexpected sunsetting of Destiny 2 and the reported absence of a direct sequel. This digital protest reflects widespread community frustration, amplified by a popular streamer and a petition with over 330,000 signatures.

Microsoft Unveils ASSERT, Simplifying AI Behavior Testing with Text

Microsoft has launched ASSERT, an open-source framework designed to simplify AI behavior testing. It enables developers to create comprehensive, application-specific evaluations using natural language descriptions, ensuring AI systems act as intended for particular products and services. The tool translates high-level goals into structured tests, generates scenarios, scores results, and logs execution paths.

Trump Orders Voluntary AI Model Review Before Release

President Trump has signed an executive order creating a voluntary framework for AI companies to share advanced models with the federal government before release. This initiative aims to bolster secure innovation and protect critical infrastructure, reflecting a shift from the administration's previous hands-off approach to AI safety. Companies opting for pre-release review may receive confidentiality protections.

Quick Share Meets AirDrop: A Welcome Cross-Platform Step

Quick Verdict: A Much-Anticipated Bridge For years, seamless file sharing between Android and iOS devices has been a frustrating chasm, often requiring clunky workarounds or third-party apps. This month, Google is

Blue Origin's New Glenn Explosion: Key Components Survive, 2026

Blue Origin announced that critical fuel tanks and key launch pad components survived last week's New Glenn rocket explosion, paving a faster path back to flight. CEO Dave Limp pledges a return to orbital missions before year-end, which is crucial for NASA's Artemis lunar program to maintain its tight schedule for crewed landings.

Amazon Music Prime: A Troubling Tune for Subscribers

Quick Verdict Amazon Music Prime, long considered an ad-free perk of a Prime membership, is seeing ads introduced for subscribers in India, with reports suggesting similar changes elsewhere. While US users are currently