AI for Medical Advice: Proceed with Caution

Quick Verdict: Proceed with Caution

Artificial intelligence offers unparalleled convenience and accessibility when seeking information about your health. For general wellness guidance, AI tools can be remarkably helpful, acting as a quick resource for everything from meal planning to workout regimens. However, when it comes to personal medical history, diagnosis, or treatment, the consensus from medical professionals is clear: AI is a springboard for information, not a substitute for a doctor. Its inability to truly understand nuanced medical context, coupled with a documented propensity for errors, means that while AI can enhance administrative tasks for healthcare providers, consumers must approach its diagnostic capabilities with extreme skepticism to avoid serious health risks.

The Rise of AI in Healthcare: A Double-Edged Scalpel

The digital age has ushered in an era where health advice is readily available, often irrespective of its credibility. This proliferation of information coincides with a concerning trend: historically low levels of public trust in the traditional healthcare system. Recent polls indicate a 5-7% decrease in trust for federal health agencies like the CDC and FDA over the past year alone. Capitalizing on this shifting landscape, the tech world is rapidly developing AI-driven medical alternatives that are free, always available, and quick to use.

The public's embrace of this technology is significant, with a survey revealing that 63% of respondents find AI-generated health information reliable. Major AI developers, including Google, OpenAI, and Anthropic, have already launched health-oriented large language models (LLMs) primarily for healthcare professionals. Microsoft has gone further with Copilot Health, a secure medical AI tool that integrates health records, wearable data, and medical history. Rumors even suggest Apple is developing its own health AI, while Oura has launched an experimental women's health LLM.

For family physician Dr. Alexa Mieses Malchuk, this technological shift has profoundly altered patient interactions and her practice. While AI can provide exhaustive answers to health queries, it also has a significant capacity to be wrong. Her insights offer a critical perspective on the utility and inherent dangers of relying on AI for personal medical advice.

Behind the Screens: What Health AI Can (and Can't) Do

AI's role in healthcare is bifurcated, serving distinct purposes for professionals and the general public.

For Healthcare Professionals: Dr. Mieses Malchuk herself is not averse to AI; she leverages it to streamline administrative burdens that often consume doctors' valuable time. AI excels at tasks like triaging patient messages, generating anticipatory guidance before appointments, and assisting with scheduling, clinical documentation, and medical coding. Companies like Amazon and Google are actively building software to support these functions, aiming to reduce the paperwork burden that often keeps doctors from direct patient care. These applications represent a significant leap in operational efficiency within the healthcare system, allowing physicians to focus more on patient interaction.

For Consumers: The allure of consumer-facing health AI lies in its accessibility and speed. It can provide immediate answers to myriad health questions, offering comprehensive explanations. For those seeking general wellness guidance, AI can be a powerful ally. For instance, if a patient is newly diagnosed with celiac disease, AI can generate custom meal plans, suggest suitable foods, and provide helpful dietary recommendations. Similarly, it's adept at creating customized workout regimens. In these areas, where general information and planning are key, AI serves as an excellent wellness tool for individuals without medical training.

The Doctor's Perspective: A Springboard, Not a Solution

Despite the undeniable convenience, Dr. Mieses Malchuk strongly advises medical non-professionals to use AI as a "springboard" for information, rather than the "end-all, be-all" source of medical advice. While the instant gratification and perceived certainty offered by AI chatbots can be comforting, she warns that these tools are fundamentally incapable of diagnosing conditions. The core issue, she notes, is that a chatbot's responses are only as good as the questions asked, and users often inadvertently omit crucial medical information, which could drastically alter a potential diagnosis or treatment plan.

She emphasizes that access to AI shouldn't lead individuals away from their primary care physicians but rather encourage a partnership where doctors help patients "sift through what they're finding online." An emerging concern for Dr. Mieses Malchuk is that patients are becoming less willing to disclose their AI-based research, yet arrive with a heightened, often misplaced, certainty about their self-diagnoses. This creates a challenging dynamic, as "even in medicine, there's not always 100% certainty about anything."

The Alarming Downsides: When AI Gets It Wrong

The most significant concern surrounding consumer-facing health AI is its potential for error and the false sense of security it can instill. Dr. Mieses Malchuk fears that tools like ChatGPT could lead people to believe they don't need to consult a doctor, potentially resulting in "a missed opportunity to diagnose something early." The consequences of such errors can be dire.

A recent study published in Nature revealed a stark reality: ChatGPT undertriaged over half of critical cases, advising patients towards a 24-48-hour evaluation instead of directing them to an emergency department. The study's authors highlighted "missed high-risk emergencies and inconsistent activation of crisis safeguards," raising serious safety concerns that demand rigorous validation before widespread deployment of AI triage systems for consumers. This underscores the critical limitation: AI cannot replace the diagnostic precision, contextual understanding, and ethical responsibility of a human medical professional.

AI vs. Human Expertise: A Necessary Partnership

The distinction between AI and human medical expertise is profound. AI excels at data processing and pattern recognition within defined parameters. It can efficiently compile information and generate responses based on vast datasets. However, a doctor provides holistic care, taking into account a patient's entire medical history, current symptoms, lifestyle, emotional state, and personal context. They possess the nuanced judgment to interpret subtle cues, conduct physical examinations, order appropriate tests, and adapt treatments based on individual responses – all while upholding an oath to "first do no harm."

AI, in its current form, cannot replicate this comprehensive, compassionate, and ethically grounded approach. It lacks the ability to truly understand the subjective experience of illness or the complex interplay of factors that constitute a patient's health. Therefore, rather than a competition, the most effective approach is a partnership: using AI for its strengths (general information, administrative efficiency) while reserving critical diagnosis and treatment decisions for qualified medical professionals. Mistrust in the medical system is indeed growing, but relying solely on AI, which lacks accountability and true understanding, is an "unfortunate step point" that risks patient safety.

The Verdict: Trusting AI with Your Health – A Measured Approach

Based on expert medical opinion and current capabilities, trusting AI with your comprehensive medical history and expecting it to provide accurate diagnoses or treatment plans is a risky endeavor. While AI tools are invaluable for administrative streamlining in healthcare and can be excellent resources for general wellness advice and planning (like diet and exercise regimens), their limitations are significant and potentially dangerous when it comes to personal health concerns.

For complex symptoms, chronic conditions, or any situation requiring a definitive diagnosis or a personalized treatment strategy, the only reliable course of action is to consult a licensed medical professional. Use AI to inform yourself and to formulate questions for your doctor, but never allow it to be the sole determinant of your health decisions. Your well-being depends on a human touch.

FAQ

Q: Is AI-generated health information reliable?

A: In one survey, 63% of respondents said they find it reliable, but medical professionals warn that it can get many things wrong, cannot diagnose conditions, and gives responses only as good as the information it is given. Use it with extreme caution and always verify with a doctor.

Q: Can AI diagnose medical conditions or recommend treatments?

A: No, AI tools cannot diagnose conditions or provide definitive treatment plans. They lack the nuanced understanding of a human doctor, risk providing inaccurate information (like undertriaging emergencies), and could lead to missed opportunities for early diagnosis.

Q: How should I use AI for health advice, according to medical experts?

A: Experts recommend using AI as a "springboard": a starting point for general wellness guidance (e.g., meal plans for specific diets, workout regimens) and for formulating questions to bring to your doctor. For any personal medical concerns, diagnosis, or treatment, always partner with your primary care physician to sift through what you're finding online.

Related articles

BMW iX3 Flow Edition: A Colorful Leap for Automotive Personalization

Quick Verdict BMW's iX3 Flow Edition, unveiled at the Beijing Auto Show 2026, isn't just another electric SUV; it's a groundbreaking statement piece that brings E Ink's color-changing Prism technology to a production

Sony PlayStation Age Verification: A Necessary Step, Details Pending

Sony's new age verification for PlayStation in the UK and Ireland is a necessary step for child safety and compliance. However, details on implementation, user experience, and privacy are crucial and currently lacking.

Polymarket: High Stakes, Higher Risks

Quick Verdict Polymarket, a prominent prediction market platform, has been thrust into the spotlight following allegations of insider trading involving a US Army soldier, Gannon Ken Van Dyke. While the platform swiftly

regional: Seattle HR leader’s candid book offers practical insights

Seattle HR leader Mikaela Kiner's new book, "The Reverb Way," offers candid insights into building a thriving business without personal sacrifice. Drawing on her experience at Microsoft, Amazon, Starbucks, and her firm Reverb, Kiner provides practical advice for founders. The book covers navigating challenges, prioritizing work-life balance, and leveraging community, with current insights on AI's impact and Reverb's recent business rebound.

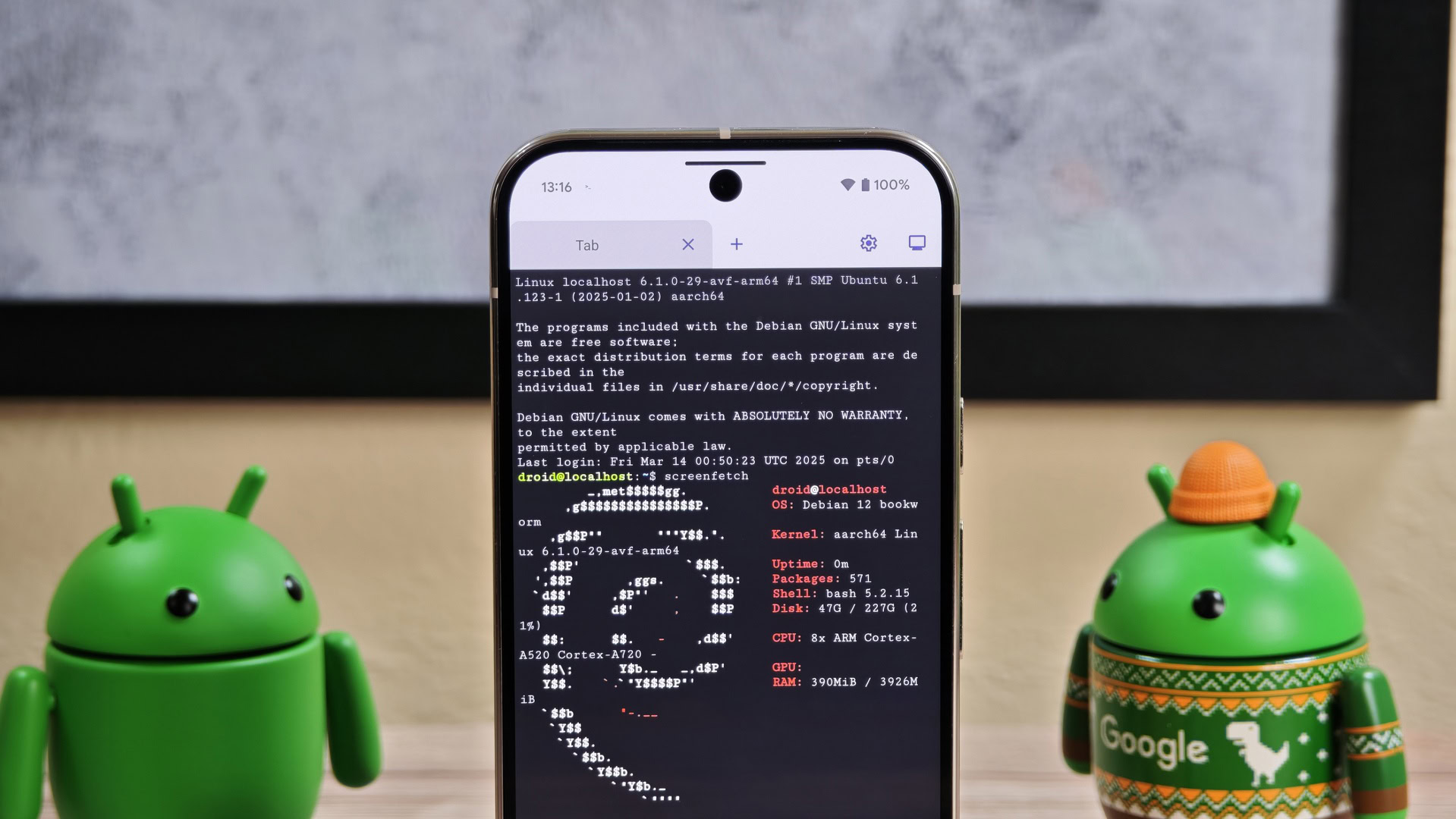

Android's Linux Terminal: Max Performance, Visual Cost – A Deep Dive

Android's built-in Linux Terminal is gaining powerful new resolution controls in Android 17, letting users sacrifice visual quality for max performance when running graphical Linux apps and games. It’s a significant upgrade for enthusiasts but comes with trade-offs.

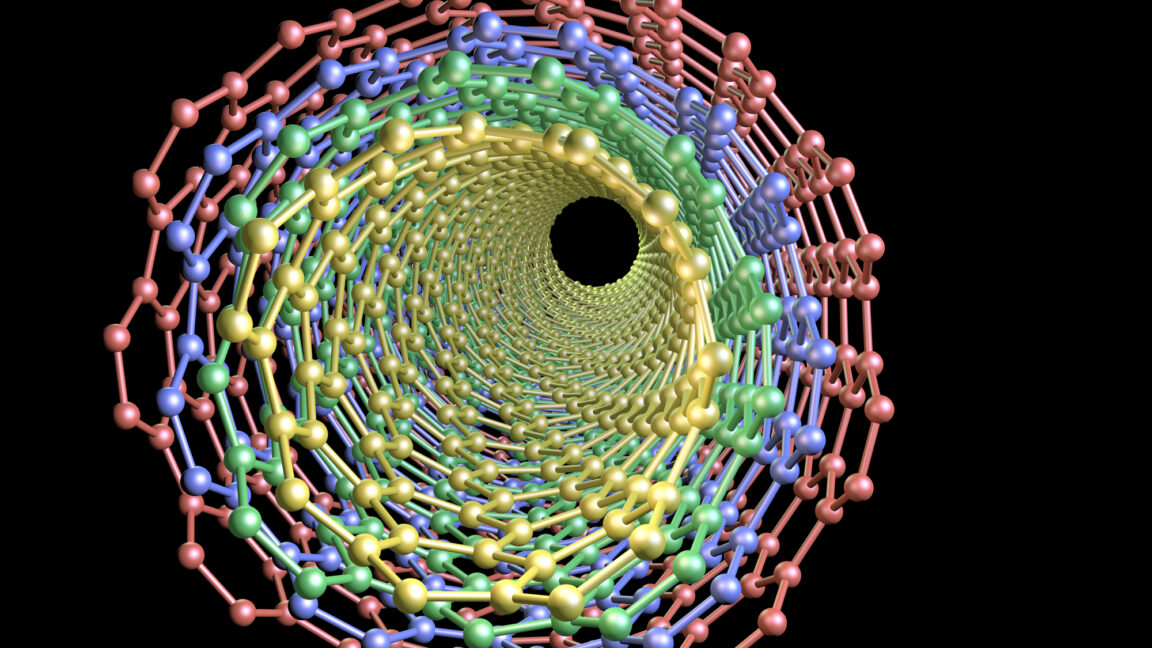

Carbon Nanotube Wiring: A Glimpse into the Future, But Not Ready for

Verdict: A Promising Scientific Breakthrough, Not a Consumer Product (Yet) Carbon nanotube (CNT) wiring has long held the promise of revolutionizing electronics, and new research brings us tantalizingly closer to that