Blocking the Internet Archive: A Non-Solution for AI, a Historical Cost for the Web

Major publishers are blocking the Internet Archive, citing concerns about AI scraping. The move is meant to control how commercial AI uses their content, but it also cuts new gaps into the web's historical record, a resource that journalists, researchers, and courts have relied on for decades. The EFF argues that archiving and AI training for transformative purposes are often fair use, and that sacrificing public digital history is a severe and irreversible mistake.

The Looming Digital Amnesia: Publishers vs. Preservation

For those of us building and interacting with the web's vast information ecosystem, a concerning trend is emerging: major publishers are actively blocking the Internet Archive. This isn't just about controlling content access; it's a profound move that threatens the historical record of the internet itself. The New York Times, for instance, has implemented technical measures that go beyond standard robots.txt directives to prevent the Archive's crawlers from accessing its site. Other significant news outlets, like The Guardian, appear to be adopting similar strategies.

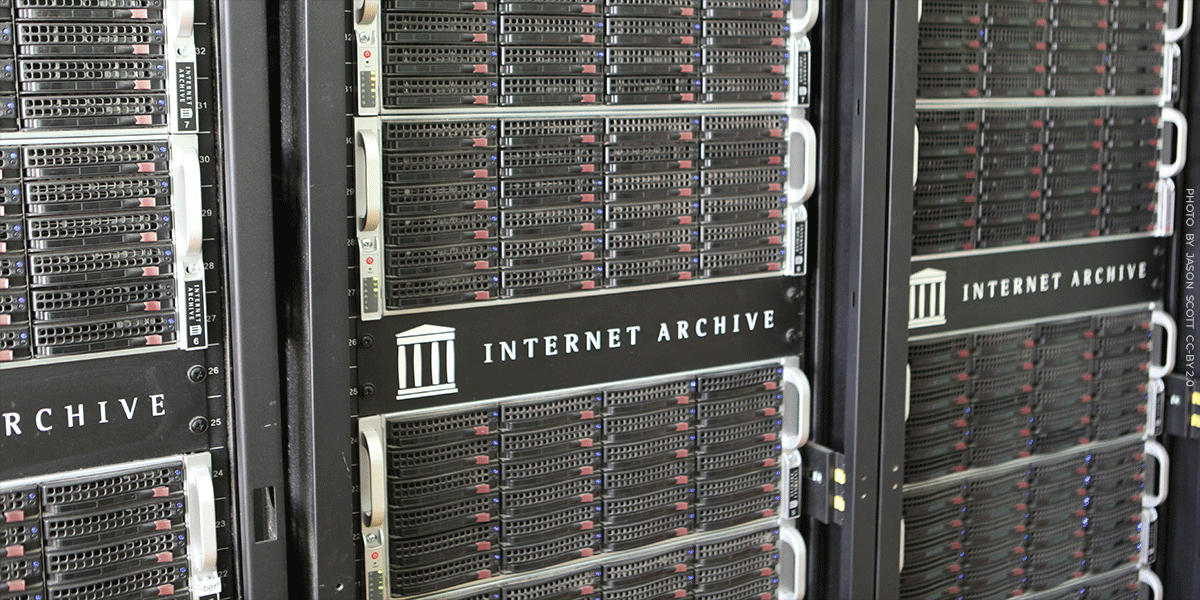

This action poses a critical challenge to the Internet Archive's foundational mission: to preserve the web and make it publicly accessible. The Archive's Wayback Machine, a digital library containing over a trillion archived web pages, is an indispensable resource. It's a daily tool for journalists verifying facts, researchers tracing information evolution, and legal professionals citing online evidence. When a significant portion of this digital landscape becomes inaccessible to the Archive, we risk losing verifiable historical context at an unprecedented scale.

The Internet Archive: Our Collective Digital Memory

Think of the Internet Archive as the world's largest digital library, meticulously collecting and indexing web content for nearly three decades. Its role extends beyond simple data storage; it acts as a crucial historical ledger for online information. In a dynamic medium where content can be edited, updated, or outright removed without notice, the Wayback Machine frequently stands as the sole reliable record of an article's original publication state. This isn't just an academic concern; consider the impact on investigative journalism, historical analysis, or even everyday fact-checking if the original source material vanishes or is subtly altered.

For developers, the Internet Archive represents a robust, publicly accessible API to the past web. It allows for analysis of trends, recovery of lost content, and validation of historical claims. Its extensive dataset, which supports millions of links on platforms like Wikipedia, underscores its critical role as a stable and authoritative reference point in the global information infrastructure. The sheer scale (over a trillion pages across hundreds of languages) highlights the monumental preservation effort that is now being actively impeded.
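To make the "API to the past web" idea concrete, here is a minimal Python sketch built around the Wayback Machine's availability endpoint, which returns the capture closest to a requested date. The helper names `build_availability_url` and `closest_snapshot` are hypothetical, and `SAMPLE_RESPONSE` is an illustrative payload in the shape that endpoint returns, not live data. The sketch deliberately makes no network call, so it only shows how a query is formed and how a reply could be read.

```python
import json
from urllib.parse import urlencode

# Public Wayback Machine availability endpoint.
AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def build_availability_url(page_url, timestamp=None):
    """Build a query URL asking for the capture closest to `timestamp`.

    `timestamp` uses the Wayback format YYYYMMDD[hhmmss]; if omitted,
    the endpoint returns the most recent capture it knows about.
    """
    params = {"url": page_url}
    if timestamp:
        params["timestamp"] = timestamp
    return f"{AVAILABILITY_ENDPOINT}?{urlencode(params)}"


def closest_snapshot(payload):
    """Return (snapshot_url, timestamp) from a decoded response, or None."""
    closest = payload.get("archived_snapshots", {}).get("closest")
    if not closest or not closest.get("available"):
        return None
    return closest["url"], closest["timestamp"]


# Illustrative payload only (assumed shape, not fetched from the service).
SAMPLE_RESPONSE = json.loads("""
{
  "archived_snapshots": {
    "closest": {
      "available": true,
      "url": "http://web.archive.org/web/20060101064348/http://www.example.com:80/",
      "timestamp": "20060101064348",
      "status": "200"
    }
  }
}
""")

if __name__ == "__main__":
    print(build_availability_url("example.com", "20060101"))
    print(closest_snapshot(SAMPLE_RESPONSE))
```

In a real tool, the URL from `build_availability_url` would be fetched with an HTTP client and the decoded JSON passed to `closest_snapshot`. An empty `archived_snapshots` object means no capture exists for that page, which is the result a blocked site would produce for anything published after the block.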

The Catalyst: AI, Scraping, and Copyright Disputes

Publishers state their primary motivation for blocking the Internet Archive is concern over AI companies scraping their content for model training. This concern is valid; the rapid proliferation of generative AI has ignited numerous legal disputes regarding the use of copyrighted material for training large language models (LLMs). Publishers, including The New York Times, are indeed pursuing litigation against AI companies, asserting that such training constitutes copyright infringement.

However, it's crucial to differentiate between commercial AI enterprises and non-profit archival institutions. The Electronic Frontier Foundation (EFF) argues that the act of training AI models on copyrighted material often falls under fair use, similar to how search engines index web content. Regardless of the eventual legal outcomes of these AI-specific lawsuits, the response of blocking an archival institution like the Internet Archive is a disproportionate and ultimately counterproductive measure. The Archive is not developing commercial AI systems; it is performing a public service of historical preservation.

The Unintended Consequence: Erasing the Web's History

The fundamental flaw in this blocking strategy is its indiscriminate nature. By denying access to the Internet Archive, publishers aren't just limiting commercial AI bots; they are cutting their reporting out of the web's historical record from this point on. Snapshots already captured remain, but no new ones are made, so every later article, correction, and silent edit goes unrecorded. Imagine a traditional library being ordered to stop collecting newspapers because of a separate, unrelated dispute with a new technology company. The resulting gap cannot be filled after the fact.

For developers, this implies a future where the historical context of online information becomes increasingly opaque. Building tools or conducting research that relies on tracing the evolution of web content will become significantly harder, if not impossible. The web, by its very nature, is ephemeral. Archives like the Wayback Machine provide much-needed persistence. Compromising this persistence sacrifices long-term public good for a short-term, likely ineffective, attempt to control a different technological challenge.
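As a small illustration of what "tracing the evolution of web content" looks like in practice, the sketch below compares the text of two captures of the same page and reports the lines that changed. It assumes the snapshot text has already been retrieved and extracted; the function name `snapshot_diff` and the sample strings are hypothetical and stand in for real archived copies.

```python
import difflib


def snapshot_diff(old_text, new_text, old_label="snapshot-old", new_label="snapshot-new"):
    """Return unified-diff lines describing how `new_text` differs from `old_text`."""
    return list(
        difflib.unified_diff(
            old_text.splitlines(),
            new_text.splitlines(),
            fromfile=old_label,
            tofile=new_label,
            lineterm="",
        )
    )


def changed_lines(diff):
    """Keep only added/removed content lines, dropping the diff headers."""
    return [
        line
        for line in diff
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    ]


# Hypothetical article text from two captures of the same URL.
EARLIER = "Headline\nThe budget is 4 million.\nEnd"
LATER = "Headline\nThe budget is 5 million.\nEnd"

if __name__ == "__main__":
    for line in changed_lines(snapshot_diff(EARLIER, LATER)):
        print(line)
```

This kind of comparison is only possible when both captures exist. Once a site blocks archival crawls, there is no later snapshot to pass in as `new_text`, and an edit like the one above leaves no independent trace.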

Archiving and Search: Legally Sound Principles

The legal underpinnings of archiving and making material searchable are well-established. Courts have repeatedly recognized that creating a searchable index often necessitates copying the underlying material, and that this copying serves a transformative purpose. A landmark example is the Google Books case (Authors Guild v. Google), in which the Second Circuit held in 2015 that scanning and indexing entire books to create a searchable database constituted fair use, because it enabled discovery, research, and new insights into creative works.

The same reasoning applies to the Internet Archive's operations. The Archive copies web pages to enable historical search, preservation, and research, purposes that are transformative and serve the public interest. While courts may eventually refine the boundaries of fair use concerning AI training, the legal framework protecting archiving and search engines is robust. Sacrificing the invaluable public resource that this framework protects, in order to address distinct disputes with commercial AI entities, would be a profound and possibly irreversible error for the integrity of our digital world.

Practical Takeaways

As developers, we operate within the digital landscape and often rely on the stability and accessibility of information. The actions against the Internet Archive underscore several critical points:

- Digital Ephemerality: Online content is inherently volatile. Trusting that something published today will be accessible or unchanged tomorrow is a flawed assumption without robust archival efforts.

- Importance of Preservation: Non-profit digital libraries like the Internet Archive are crucial public infrastructure. Their role in maintaining historical context and data integrity is irreplaceable.

- Fair Use and Transformative Use: Understanding fair use principles is vital, especially as new technologies emerge. The distinction between commercial exploitation and transformative uses (like search, archiving, or even potentially AI training) is central to legal and ethical discussions.

- Impact on Data Integrity: Loss of archived web content directly impacts the ability to verify, research, and build reliable systems that depend on historical data. This isn't just a legal issue; it's a data integrity challenge.

FAQ

Q: How do publishers typically block crawlers beyond robots.txt?

A: While robots.txt is a declarative protocol for polite crawler behavior, publishers can implement more aggressive technical measures. This often involves dynamic IP blocking, user-agent string blacklisting, CAPTCHAs, or even advanced bot detection algorithms that analyze behavioral patterns characteristic of crawlers, preventing access regardless of robots.txt compliance.
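The difference between the two layers can be sketched in a few lines of Python. The first function uses the standard library's `urllib.robotparser` to show what a compliant crawler does with a robots.txt file: it reads the rules and decides for itself whether to fetch. The second function stands in for a server-side user-agent filter, which refuses the request no matter what the crawler would have decided. The robots.txt content, the `news.example` host, the `archive.org_bot` user-agent token, and the blocklist are all assumptions chosen for illustration, not any publisher's actual configuration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one named crawler is excluded entirely,
# everyone else is only kept out of /private/.
ROBOTS_TXT = """\
User-agent: archive.org_bot
Disallow: /

User-agent: *
Disallow: /private/
"""


def can_fetch(robots_txt, user_agent, url):
    """Crawler-side check: would a polite crawler fetch `url`?"""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical server-side blocklist of user-agent substrings.
BLOCKED_AGENT_SUBSTRINGS = ("archive.org_bot",)


def server_status_for(user_agent):
    """Server-side check: HTTP status a simple user-agent filter would return."""
    ua = user_agent.lower()
    return 403 if any(token in ua for token in BLOCKED_AGENT_SUBSTRINGS) else 200


if __name__ == "__main__":
    print(can_fetch(ROBOTS_TXT, "archive.org_bot", "https://news.example/article"))
    print(can_fetch(ROBOTS_TXT, "SomeOtherBot", "https://news.example/article"))
    print(server_status_for("Mozilla/5.0 (compatible; archive.org_bot)"))
```

The design point is where the decision is made. With robots.txt the crawler enforces the rule on itself, so the file works only for crawlers that choose to honor it. With server-side filtering the publisher enforces the rule, which is why it stops a well-behaved archival crawler just as effectively as any other bot it can identify.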

Q: What is the "transformative purpose" in fair use, particularly for digital archives?

A: In the context of fair use, a "transformative purpose" means that the new work (e.g., an archived copy, a searchable index) adds new meaning, expression, or utility to the original material, rather than merely superseding it. For digital archives, making content searchable and preserving it for historical, research, and educational purposes transforms its utility from a transient publication into a stable, discoverable historical record, thereby enabling new forms of analysis and access that the original publication did not inherently provide.

Q: If content is removed from a live site, does blocking the Internet Archive erase its past archival copies?

A: No. Blocking crawls prevents the Internet Archive from creating new snapshots; it does not retroactively delete copies that were already archived. The cost lies in what comes after the block: if a page is later updated, corrected, or removed, no snapshot records that change, so the last pre-block capture becomes the final entry in the record and may no longer reflect what the publisher actually shows readers.
