Causal Inference for LLM Features: The Propensity Score

Every product experimentation team eventually confronts a common challenge when launching new features, especially those leveraging Large Language Models (LLMs): the 'Opt-In Trap'. Imagine shipping a new AI assistant mode. Your dashboard reports that users who enable it have a task completion rate 21 percentage points higher than users who don't. It looks fantastic, but you instinctively know something is amiss.

The core problem? Users who opt into features, particularly those behind a user-controlled toggle (e.g., "Try our AI assistant," "Enable smart replies"), are rarely a random sample. Heavy-engagement users, early adopters, or those with specific intentions are far more likely to click. This self-selection creates a systematic difference between your 'treated' (opted-in) and 'control' (non-opted-in) groups before the feature even makes an impact. A naive comparison, like a simple t-test, will attribute these pre-existing differences (selection bias) to the feature's effect, leading to a wildly inflated estimate. In our synthetic dataset, a true +8 percentage point effect inflates to +21 percentage points – a 2.6x overshoot.

Why Naive Comparisons Fail for Opt-In Features

Three mechanisms commonly drive this selection bias:

- Selection on Engagement: Power users are more likely to explore new features. If your most engaged users opt in at a high rate (e.g., 65%) while light users opt in at a low rate (e.g., 12%), the opted-in group is disproportionately made up of users who were already more active and more likely to complete tasks, irrespective of the new feature.

- Selection on Intent: Users opting into a feature often have an immediate, specific need. A developer enabling "code suggestions" likely has code to write and would show higher task completion even without the AI assist.

- Selection on Risk Tolerance: Early adopters are often more tolerant of initial bugs or performance issues. This means your opt-in group might be enriched with users less likely to churn due to imperfections, impacting downstream metrics.
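The first mechanism is easy to reproduce in a few lines. The sketch below is a minimal simulation and not the companion repository's generator: the tier shares and baseline completion rates are assumed values chosen for illustration, while the opt-in rates and the +0.08 effect echo the numbers used in this article.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200_000
true_effect = 0.08

# Tiers coded 0=light, 1=medium, 2=heavy; shares and baselines are assumed values.
tier = rng.choice(3, size=n, p=[0.5, 0.3, 0.2])
opt_in_rate = np.array([0.12, 0.35, 0.65])
base_completion = np.array([0.30, 0.50, 0.70])

treated = rng.random(n) < opt_in_rate[tier]
completed = rng.random(n) < base_completion[tier] + true_effect * treated

# Naive comparison: mixes the feature effect with the tier make-up of each group.
naive = completed[treated].mean() - completed[~treated].mean()

# Within-tier comparison, averaged by tier share: removes the engagement confounder.
within = sum(
    (tier == g).mean()
    * (completed[treated & (tier == g)].mean() - completed[~treated & (tier == g)].mean())
    for g in range(3)
)

print(f"true effect: {true_effect:+.3f}")
print(f"naive:       {naive:+.3f}")
print(f"within-tier: {within:+.3f}")
```

Because engagement is the only confounder in this toy setup, comparing users within the same tier recovers the built-in effect, while the naive gap lands far above it.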

What Propensity Scores Do

Propensity score methods are statistical tools designed to disentangle selection bias from the true causal effect. A propensity score is simply the probability that a user opts in, given their observable characteristics (e.g., engagement tier, historical behavior). By estimating this probability, we can reweight or rematch our comparison groups to make them appear balanced on these observable characteristics, much like a randomized experiment would.

This relies on three key identification assumptions:

- Unconfoundedness: All variables influencing both opt-in and the outcome are included in your propensity model.

- Overlap (Positivity): Every user has a non-zero probability of both opting in and not opting in. This ensures we have comparable users across both groups.

- No Interference (SUTVA): One user's opt-in decision doesn't affect another user's outcome.

Violating any of these can lead to biased estimates.
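Of the three assumptions, overlap is the one most directly checkable from data. The helper below is a hypothetical sketch (the name `overlap_report` and the `eps` threshold are assumptions, not part of the companion repository): it reports the common-support range of the propensity scores and the share of users falling outside it or sitting at extreme values.

```python
import numpy as np

def overlap_report(propensity, treated, eps=0.05):
    """Summarize propensity overlap between treated and control users."""
    propensity = np.asarray(propensity, dtype=float)
    treated = np.asarray(treated, dtype=bool)

    # Common support: the range of scores observed in BOTH groups.
    lo = max(propensity[treated].min(), propensity[~treated].min())
    hi = min(propensity[treated].max(), propensity[~treated].max())

    outside = (propensity < lo) | (propensity > hi)
    extreme = (propensity < eps) | (propensity > 1 - eps)
    return {
        "common_support": (float(lo), float(hi)),
        "share_outside_support": float(outside.mean()),
        "share_extreme": float(extreme.mean()),
    }

# Tiny illustrative input (made-up scores, not real output of any model).
report = overlap_report(
    propensity=[0.02, 0.30, 0.40, 0.60, 0.70, 0.98],
    treated=[0, 0, 1, 0, 1, 1],
)
print(report)
```

A large share outside the common support, or many scores near 0 or 1, suggests that some users have no comparable counterpart in the other group.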

Practical Implementation in Python

We'll walk through a pipeline using a synthetic SaaS dataset, where the ground-truth causal effect is known to be +0.08. You'll need numpy, pandas, scikit-learn, and matplotlib. First, clone the companion repository and generate the synthetic data:

```shell
git clone https://github.com/RudrenduPaul/product-experimentation-causal-inference-genai-llm.git
cd product-experimentation-causal-inference-genai-llm
python data/generate_data.py --seed 42 --n-users 50000 --out data/synthetic_llm_logs.csv
```

Load the data and observe the naive effect:

```python
import pandas as pd

df = pd.read_csv("data/synthetic_llm_logs.csv")

# Opt-in rate by engagement tier
print(df.groupby("engagement_tier").opt_in_agent_mode.mean().round(3))

# Naive effect: raw difference in task completion, opted-in vs. not opted-in
naive_effect = (
    df[df.opt_in_agent_mode == 1].task_completed.mean()
    - df[df.opt_in_agent_mode == 0].task_completed.mean()
)
print(f"Naive opt-in effect: {naive_effect:+.4f}")
```

Expected output:

```
engagement_tier
heavy     0.647
light     0.120
medium    0.353
Name: opt_in_agent_mode, dtype: float64
Naive opt-in effect: +0.2106
```

Heavy users opt in far more often than light users (64.7% vs. 12.0%), and the naive effect of +0.2106 is far from the true +0.08.

Step 1: Estimate the Propensity Score

We use logistic regression to predict opt_in_agent_mode based on observable characteristics. For production, include all relevant confounders.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score

# One-hot encode the categorical tier; query_confidence enters as a numeric column
X = pd.get_dummies(
    df[["engagement_tier", "query_confidence"]], drop_first=True
).astype(float)
y_treat = df.opt_in_agent_mode

ps_model = LogisticRegression(max_iter=1000).fit(X, y_treat)
df["propensity"] = ps_model.predict_proba(X)[:, 1]

# Sanity checks for calibration and overlap
ps_t = df[df.opt_in_agent_mode == 1].propensity
ps_c = df[df.opt_in_agent_mode == 0].propensity
print(df.groupby("engagement_tier").propensity.mean().round(3))
print(f"Propensity range (treated): {ps_t.min():.3f} - {ps_t.max():.3f}")
print(f"Propensity range (control): {ps_c.min():.3f} - {ps_c.max():.3f}")
print(f"Propensity model AUC: {roc_auc_score(y_treat, df.propensity):.3f}")
```

Expected output:

```
engagement_tier
heavy     0.646
light     0.120
medium    0.353
Name: propensity, dtype: float64
Propensity range (treated): 0.114 - 0.675
Propensity range (control): 0.114 - 0.673
Propensity model AUC: 0.744
```

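The propensities are well calibrated at the tier level (the mean propensity matches the observed opt-in rate in each tier), the model discriminates reasonably between opt-in and non-opt-in users (AUC 0.744), and, crucially, the treated and control propensity ranges overlap almost exactly (roughly 0.11 to 0.67 in both groups), which is consistent with the positivity assumption.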

Step 2: Inverse-Probability Weighting (IPW)

IPW reweights each user inversely to their probability of receiving their observed treatment status. This balances covariates, allowing us to estimate the Average Treatment Effect (ATE) or Average Treatment Effect on the Treated (ATT).
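In symbols, the two normalized weighting estimators computed in this step can be written as follows. The notation is an assumption introduced here for illustration: $T_i$ is the opt-in indicator, $Y_i$ the task-completion outcome, and $e_i$ the estimated propensity of user $i$.

```latex
\hat{\tau}_{\mathrm{ATE}}
  = \frac{\sum_i T_i Y_i / e_i}{\sum_i T_i / e_i}
  - \frac{\sum_i (1 - T_i)\, Y_i / (1 - e_i)}{\sum_i (1 - T_i) / (1 - e_i)}

\hat{\tau}_{\mathrm{ATT}}
  = \frac{\sum_i T_i Y_i}{\sum_i T_i}
  - \frac{\sum_i (1 - T_i)\, Y_i\, e_i / (1 - e_i)}{\sum_i (1 - T_i)\, e_i / (1 - e_i)}
```

Dividing by the sum of the weights, rather than by the sample size, keeps each weighted mean inside the range of the observed outcomes even when a few weights are large.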

```python
import numpy as np

# ATE weights: 1/P(treat) for treated, 1/P(no treat) for control
df["ipw"] = np.where(
    df.opt_in_agent_mode == 1,
    1 / df.propensity,
    1 / (1 - df.propensity),
)
t = df[df.opt_in_agent_mode == 1]
c = df[df.opt_in_agent_mode == 0]
ate_ipw = (
    (t.task_completed * t.ipw).sum() / t.ipw.sum()
    - (c.task_completed * c.ipw).sum() / c.ipw.sum()
)
print(f"IPW average treatment effect (ATE): {ate_ipw:+.4f}")

# ATT weights: 1 for treated, P(treat)/P(no treat) for control
df["ipw_att"] = np.where(
    df.opt_in_agent_mode == 1,
    1,
    df.propensity / (1 - df.propensity),
)
t = df[df.opt_in_agent_mode == 1]
c = df[df.opt_in_agent_mode == 0]
treated_mean = t.task_completed.mean()
control_w_mean = (c.task_completed * c.ipw_att).sum() / c.ipw_att.sum()
att_ipw = treated_mean - control_w_mean
print(f"IPW average treatment effect on treated (ATT): {att_ipw:+.4f}")
```

Expected output:

```
IPW average treatment effect (ATE): +0.0851
IPW average treatment effect on treated (ATT): +0.0770
```

Both ATE (+0.0851) and ATT (+0.0770) are much closer to the true +0.08 effect than the naive +0.2106. The ATE answers what would happen on average if every user had the feature, while the ATT quantifies what the users who actually opted in gained.

Step 3: Nearest-Neighbor Matching

Matching pairs each treated user with one or more control users who have the most similar propensity scores. This directly estimates ATT.

```python
from sklearn.neighbors import NearestNeighbors

# Propensity scores as (n, 1) arrays, as expected by scikit-learn
treated_ps = df[df.opt_in_agent_mode == 1][["propensity"]].values
control_ps = df[df.opt_in_agent_mode == 0][["propensity"]].values

# For each treated user, find the control user with the closest propensity
# (with replacement: one control user can be matched several times)
nn = NearestNeighbors(n_neighbors=1).fit(control_ps)
_, idx = nn.kneighbors(treated_ps)

treated_outcomes = df[df.opt_in_agent_mode == 1].task_completed.values
matched_control_outcomes = (
    df[df.opt_in_agent_mode == 0].task_completed.values[idx.flatten()]
)
att_match = (treated_outcomes - matched_control_outcomes).mean()
print(f"1-NN matching ATT: {att_match:+.4f}")
```

Expected output:

1-NN matching ATT: +0.0752

The 1-Nearest-Neighbor matching ATT (+0.0752) is also close to the ground truth. Matching with replacement (as done here) can reduce bias but potentially increase variance. For robust results, k-nearest-neighbor matching (k=3-5) with replacement is often a good default.
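The k-nearest-neighbor variant mentioned above can be sketched with NumPy alone. This is a hypothetical helper (`knn_match_att` is not part of the companion repository) that builds the full treated-by-control distance matrix, so it assumes a dataset small enough for that matrix to fit in memory; for larger data, the scikit-learn `NearestNeighbors` approach with `n_neighbors=k` is the more practical route.

```python
import numpy as np

def knn_match_att(propensity, treated, outcome, k=3):
    """ATT via k-nearest-neighbor propensity matching with replacement."""
    propensity = np.asarray(propensity, dtype=float)
    treated = np.asarray(treated, dtype=bool)
    outcome = np.asarray(outcome, dtype=float)

    ps_t, ps_c = propensity[treated], propensity[~treated]
    y_t, y_c = outcome[treated], outcome[~treated]

    # Pairwise |propensity difference|, shape (n_treated, n_control).
    dist = np.abs(ps_t[:, None] - ps_c[None, :])
    # Indices of the k closest controls per treated user (requires k <= n_control).
    nearest = np.argpartition(dist, k - 1, axis=1)[:, :k]

    # Counterfactual for each treated user: mean outcome of its k matched controls.
    matched_mean = y_c[nearest].mean(axis=1)
    return float((y_t - matched_mean).mean())

# Tiny hand-checkable example (made-up values).
propensity = np.array([0.2, 0.2, 0.2, 0.2, 0.8, 0.8, 0.8, 0.8])
treated = np.array([1, 0, 0, 0, 1, 0, 0, 0])
outcome = np.array([1, 0, 0, 1, 1, 1, 1, 0])
att_k3 = knn_match_att(propensity, treated, outcome, k=3)
print(f"3-NN matching ATT: {att_k3:+.3f}")
```

In the toy input, each treated user is matched to the three controls sharing its propensity, so the result can be checked by hand: ((1 - 1/3) + (1 - 2/3)) / 2 = 0.5. Averaging over several neighbors smooths out the noise of any single match, at the cost of using slightly less similar controls.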

Step 4: Check Covariate Balance

Propensity score methods are only valid if they successfully balance the observable covariates. The Standardized Mean Difference (SMD) is the standard diagnostic. An |SMD| < 0.1 after weighting or matching indicates good balance.

```python
def smd(treated_vals, control_vals, treated_w=None, control_w=None):
    """Standardized mean difference, optionally with weights."""
    if treated_w is None:
        treated_w = np.ones(len(treated_vals))
    if control_w is None:
        control_w = np.ones(len(control_vals))
    t_mean = np.average(treated_vals, weights=treated_w)
    c_mean = np.average(control_vals, weights=control_w)
    # Unweighted pooled std, so raw and weighted SMDs share the same denominator
    pooled_std = np.sqrt((treated_vals.var() + control_vals.var()) / 2)
    return (t_mean - c_mean) / pooled_std

engagement_heavy = (df.engagement_tier == "heavy").astype(float).values
qc = df.query_confidence.values
tr = (df.opt_in_agent_mode == 1).values

covariates = {
    "engagement_tier_heavy": engagement_heavy,
    "query_confidence": qc,
}

print(f"{'Covariate':<30} {'Raw SMD':>10} {'Weighted SMD':>15}")
for name, vals in covariates.items():
    smd_raw = smd(vals[tr], vals[~tr])
    smd_weighted = smd(
        vals[tr],
        vals[~tr],
        treated_w=df[tr].ipw.values,
        control_w=df[~tr].ipw.values,
    )
    print(f"{name:<30} {smd_raw:>+10.3f} {smd_weighted:>+15.3f}")
```

Expected output:

```
Covariate                         Raw SMD    Weighted SMD
engagement_tier_heavy              +0.742          +0.002
query_confidence                   -0.032          -0.003
```

The raw SMD for engagement_tier_heavy is a high +0.742, indicating severe imbalance. After IPW weighting, it drops to a negligible +0.002, well below the 0.1 threshold, confirming successful balance. If SMDs remain high, your propensity model needs more features or interaction terms.

When Propensity Score Methods Fail

Propensity score methods are powerful but not foolproof. They rely heavily on the unconfoundedness assumption – that all variables driving both opt-in and the outcome are included and correctly modeled. If there's an unobserved confounder, the estimates will remain biased. Similarly, severe lack of overlap (e.g., a group of users who always opt-in and another who never do) means there are no comparable users, making causal inference impossible. In such cases, a true A/B test is the only reliable path forward.
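The unobserved-confounder failure mode can be illustrated with a small simulation. Everything here is an assumed toy setup: a hidden `intent` flag raises both opt-in probability and task completion, and is never available to the analyst. The propensity is estimated as the opt-in rate within each observed tier, which stands in for the logistic model used earlier.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200_000
true_effect = 0.08

tier = rng.integers(0, 3, size=n)   # observed covariate: 0=light, 1=medium, 2=heavy
intent = rng.random(n) < 0.5        # hidden confounder, never logged

# Assumed data-generating process: intent drives both opt-in and the outcome.
p_opt = np.array([0.10, 0.30, 0.50])[tier] + 0.25 * intent
p_base = np.array([0.30, 0.45, 0.60])[tier] + 0.15 * intent
treated = rng.random(n) < p_opt
completed = (rng.random(n) < p_base + true_effect * treated).astype(float)

def ipw_ate(propensity):
    """Normalized IPW estimate of the ATE for the simulated data above."""
    w = np.where(treated, 1 / propensity, 1 / (1 - propensity))
    t_mean = np.average(completed[treated], weights=w[treated])
    c_mean = np.average(completed[~treated], weights=w[~treated])
    return t_mean - c_mean

# What the analyst can do: propensity from the observed tier only.
ps_observed = np.array([treated[tier == g].mean() for g in range(3)])[tier]
ate_observed = ipw_ate(ps_observed)

# Oracle propensity using the hidden variable (unavailable in practice).
ate_oracle = ipw_ate(p_opt)

print(f"true effect:              {true_effect:+.3f}")
print(f"IPW, observed tier only:  {ate_observed:+.3f}")
print(f"IPW, oracle propensity:   {ate_oracle:+.3f}")
```

With the hidden variable left out, the weighted estimate stays above the built-in effect even though tier is perfectly balanced; only the oracle propensity, which no real analysis has access to, removes the remaining bias.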

Practical Takeaways

For LLM-based features deployed behind an opt-in toggle, naive comparisons are dangerously misleading. Propensity score methods (IPW or matching) offer a robust approach to estimate the true causal effect by balancing observable confounders. Always verify covariate balance using SMDs and remember to quantify uncertainty with confidence intervals (e.g., via bootstrapping) for a complete picture.
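One way to attach a confidence interval to the IPW estimate is a percentile bootstrap that re-estimates the propensity inside every resample. The sketch below is a minimal illustration on simulated data: the function names, the data-generating values, and the use of a per-tier opt-in rate as the propensity model are all assumptions made to keep the example self-contained.

```python
import numpy as np

def ipw_ate(group, treated, outcome, n_groups):
    """Normalized IPW estimate of the ATE with a per-group propensity."""
    # Propensity = opt-in rate within each group (assumes every group
    # contains both treated and control users).
    rates = np.bincount(group, weights=treated, minlength=n_groups) / np.bincount(
        group, minlength=n_groups
    )
    ps = rates[group]
    is_t = treated == 1
    w = np.where(is_t, 1 / ps, 1 / (1 - ps))
    return (
        np.average(outcome[is_t], weights=w[is_t])
        - np.average(outcome[~is_t], weights=w[~is_t])
    )

def bootstrap_ci(group, treated, outcome, n_groups, n_boot=200, alpha=0.05, seed=0):
    """Percentile bootstrap interval; the propensity is refit in each resample."""
    rng = np.random.default_rng(seed)
    n = len(outcome)
    draws = np.empty(n_boot)
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample users with replacement
        draws[b] = ipw_ate(group[idx], treated[idx], outcome[idx], n_groups)
    lo, hi = np.quantile(draws, [alpha / 2, 1 - alpha / 2])
    return float(lo), float(hi)

# Simulated data with a built-in +0.08 effect (assumed values, for illustration).
rng = np.random.default_rng(42)
n = 50_000
tier = rng.choice(3, size=n, p=[0.5, 0.3, 0.2])
treated = (rng.random(n) < np.array([0.12, 0.35, 0.65])[tier]).astype(float)
outcome = (rng.random(n) < np.array([0.30, 0.50, 0.70])[tier] + 0.08 * treated).astype(float)

point = ipw_ate(tier, treated, outcome, n_groups=3)
ci_lo, ci_hi = bootstrap_ci(tier, treated, outcome, n_groups=3)
print(f"IPW ATE: {point:+.4f}  (95% bootstrap CI: {ci_lo:+.4f} to {ci_hi:+.4f})")
```

Refitting the propensity in each resample lets the interval reflect the uncertainty in the weights as well as in the outcomes. On the real dataset, the same loop would wrap the logistic regression fit rather than the per-tier rate used here.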

FAQ

Q: How do I choose between Inverse-Probability Weighting (IPW) and Matching?

A: IPW is flexible about the estimand: with ATE weights it estimates the Average Treatment Effect (ATE) over the entire user population, and with ATT weights (treated users kept as-is, control users reweighted to resemble them) it estimates the Average Treatment Effect on the Treated (ATT). Matching typically estimates the ATT, focusing on the effect for users who actually opted in. IPW can be more sensitive to extreme weights if there's poor overlap, while matching can be computationally intensive for very large datasets. The choice often depends on the specific question (population-level vs. treated-user impact) and data characteristics.

Q: What if my propensity model's AUC is low, or my SMDs are still high after weighting?

A: These are different signals. A low AUC (e.g., near 0.5) means the chosen covariates barely predict opt-in. That is not a problem by itself: it can simply mean that opt-in is close to random with respect to those covariates. It is a concern only if you believe strong drivers of opt-in exist and are missing from the model. High SMDs (above 0.1) after weighting are the more direct warning: the propensity model has not balanced the covariates it was given, which usually points to misspecification. Enrich the model with more relevant features, include interaction terms, or consider a more flexible model (like gradient boosting) to better capture the relationship between covariates and opt-in behavior. Note that neither diagnostic can test unconfoundedness: good balance on observed covariates says nothing about unobserved confounders. If balance still can't be achieved, treat the resulting estimates as unreliable.

Q: Can propensity scores handle situations where multiple features are opted into simultaneously?

A: Propensity score methods, in their basic form, are designed for binary treatment (opt-in vs. no opt-in for a single feature). For multiple, simultaneous, and potentially interacting treatments, you would need more advanced causal inference techniques such as generalized propensity scores for continuous treatments, or multi-valued treatment propensity scores, which become significantly more complex. For product experimentation, it's often best to isolate feature impacts where possible, or design A/B tests to measure combinations of features if interaction is expected.

Related articles

The User-First Principle: Why Your Website Isn't For You

This article highlights a common problem in web development: websites often get designed to satisfy internal stakeholders' preferences rather than serve the end-user. It argues that a website is a tool, not art, and expert design decisions based on research are frequently overruled by subjective taste, leading to suboptimal user experiences and technical challenges. The piece emphasizes a user-first approach, urging developers and stakeholders to prioritize user needs backed by data.

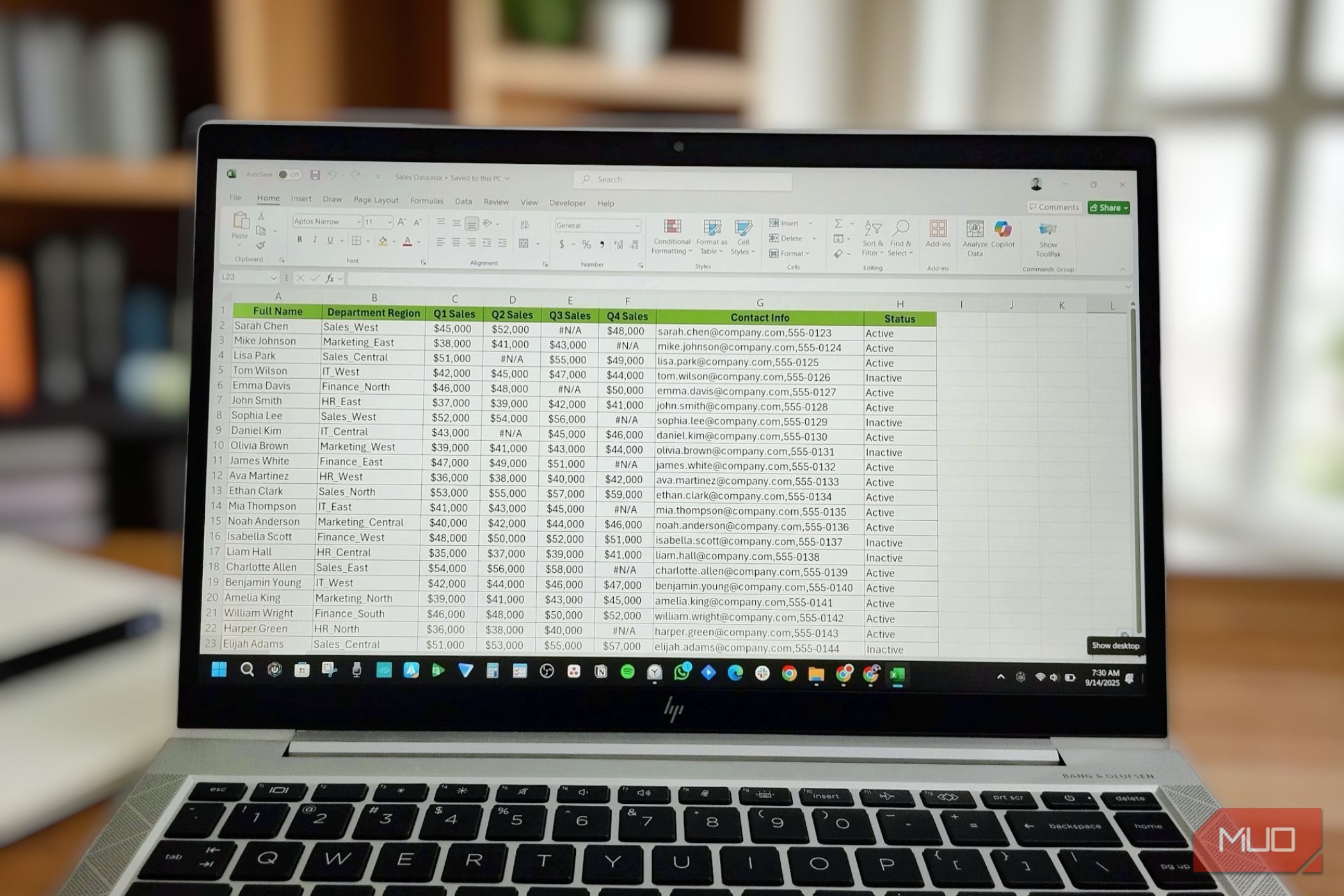

Automate Data Tasks in Excel: Faster Results Without AI Chatbots

Most people assume that artificial intelligence (AI) chatbots like ChatGPT are the quickest way to clean, sort, or analyze data. However, Excel offers a powerful suite of automation features that can handle a vast array

Stripe Launches Link: A New Digital Wallet for the AI Era

Stripe has launched Link, a new digital wallet that uniquely enables autonomous AI agents to make secure payments on behalf of users. It tackles security concerns by allowing agents to process transactions without direct access to sensitive payment credentials, utilizing virtual cards and user approval. The wallet also offers comprehensive traditional features like spending tracking and subscription management.

Motorola Moto Buds 2 Plus Review: Bose-Tuned, Feature-Packed, but

Quick Verdict Motorola’s new Moto Buds 2 Plus, retailing at $149.99, bring a compelling blend of Bose-tuned audio, robust active noise cancellation, and a suite of smart features to the US market. While the sound

AI Shifts Clean Code Economics: Why Abstraction Matters More Now

For years, the argument against introducing an interface or an abstract class in a codebase often boiled down to efficiency: "That's twice the code for the same thing." This perspective, especially prevalent in

Ubuntu Linux to Integrate AI Features Through 2026

Canonical has revealed its strategy to integrate AI features into Ubuntu Linux throughout 2026. The plan includes enhancing existing OS functions with background AI models and introducing new AI-native tools, such as advanced accessibility features and agentic AI. Canonical emphasizes model transparency and local inference, aiming to make Linux more accessible without transforming Ubuntu into an "AI product."