Anthropic's Government Ban: A Critical Review of the AI Showdown

President Trump banned federal agencies from using Anthropic's AI tools, citing the company's refusal to lift restrictions on military use. This clash over "all lawful use" versus Anthropic's ethical red lines (lethal autonomous weapons, mass surveillance) creates disruption for agencies and sets a precedent for AI ethics in government contracts.

Verdict: A Defining Moment for AI Ethics and Government Integration

President Trump's directive to ban federal agencies from using Anthropic's AI tools marks a pivotal moment in the ongoing debate over artificial intelligence ethics, especially concerning military applications. This move, stemming from a dispute over the unrestricted deployment of AI by the Department of Defense (DoD), highlights a fundamental clash between Silicon Valley's safety-first principles and the government's demand for unhindered access to critical technology. For federal agencies, it introduces a period of uncertainty and potential disruption, while for the broader AI industry, it sets a stark precedent for the terms of engagement with national security.

Unpacking the Ban: Key Details and the Underlying Conflict

The announcement on Friday, via Truth Social, instructs all federal agencies to "immediately cease" their use of Anthropic's AI. A "six-month phase out period" has been granted, theoretically allowing for further negotiations. The core of this dramatic escalation lies in the DoD's push to modify existing contracts with Anthropic and other AI companies. Originally, these deals had restrictions on how the AI could be deployed. The Pentagon now seeks to eliminate these limitations, demanding "all lawful use" of the technology.

Anthropic, an AI lab founded on the principle of building AI with safety at its core, objected strongly to this proposed change. Its primary concern is that such unrestricted use could pave the way for AI to control lethal autonomous weapons or facilitate mass surveillance of US citizens. While the Pentagon maintains that it does not currently use AI in these ways and has no plans to do so, top Trump administration officials have voiced opposition to the idea of a civilian tech company dictating military use of such important technology.

Anthropic was a pioneer in working with the US military, securing a $200 million deal with the Pentagon last year. This collaboration led to the creation of custom models known as Claude Gov, designed with fewer restrictions than their standard offerings. These models are currently unique in being used within classified systems, accessible through platforms like Palantir and Amazon's cloud for military work. While largely employed for mundane tasks like report writing and document summarization, Claude Gov also plays a role in intelligence analysis and military planning. The public dispute gained traction after reports emerged of military leaders using Claude in planning an operation to capture Venezuela’s president, Nicolás Maduro, leading to internal concerns relayed from an Anthropic staffer.

The "User Experience" for Government Agencies

For federal agencies currently relying on Anthropic's Claude Gov models, the immediate impact is a mandate to transition away from a tool that has become integrated into their operations. The six-month phase-out period offers some breathing room but undoubtedly creates significant logistical challenges. Agencies utilizing Claude Gov for tasks ranging from document summarization to critical intelligence analysis and military planning will need to find and implement alternative solutions, potentially disrupting ongoing projects and workflows. Given Anthropic's unique position in classified systems, this transition might be particularly complex and sensitive.

The convenience and efficiency gains offered by Claude Gov in routine and strategic tasks will now be lost, at least temporarily. The directive could also sow seeds of uncertainty regarding future partnerships between the government and other cutting-edge tech companies, particularly those with strong ethical guidelines or use-case restrictions. This situation exemplifies the friction that can arise when advanced, dual-use technologies meet the diverse and sometimes conflicting demands of national security and corporate ethics.

Pros and Cons of This Stance

Pros (from Anthropic's perspective and AI ethics advocates):

- Upholding Ethical AI Principles: Anthropic's resistance underscores its commitment to responsible AI development, prioritizing safety and establishing clear "red lines" against uses like fully autonomous lethal weapons and mass surveillance. This stance could encourage other tech companies to maintain similar ethical boundaries when engaging with defense contracts.

- Setting Precedent for Corporate Responsibility: By challenging the government's demand for unrestricted use, Anthropic tests the limits of Silicon Valley's shift towards defense work. It asserts a company's right to define the ethical parameters for its technology, even in high-stakes military contexts. A subsequent memo from OpenAI CEO Sam Altman, expressing similar "red lines," suggests a potential industry-wide alignment on these core ethical concerns.

- Preventing Future Misuse: By proactively addressing theoretical but potent risks, Anthropic aims to prevent scenarios where its AI could be deployed in ways inconsistent with its foundational safety mission.

Cons (from the government's perspective and operational impact):

- Loss of Critical Capabilities: Federal agencies will lose access to a tool currently used for essential functions, including intelligence analysis and military planning. This could hinder efficiency and potentially impact national security operations.

- Interference with Military Discretion: The Trump administration's view is that a civilian company should not dictate how the military uses a technology deemed crucial for defense. This ban reasserts government authority over the deployment of tools purchased for national security.

- Disruption and Cost: The phase-out will necessitate a costly and time-consuming search for, vetting, and integration of alternative AI solutions, diverting resources from other critical areas.

- Impact on Future Partnerships: This highly public dispute could deter other AI companies from engaging with the government, or at least make them significantly more cautious about the terms of such engagements.

Comparisons to Alternatives and the Broader Industry Response

Google, OpenAI, and xAI signed similar deals with the Pentagon around the same time as Anthropic, although Anthropic is the only company currently working with classified systems. The fallout from this dispute has also prompted a shift in the broader tech landscape: hundreds of workers from OpenAI and Google signed an open letter supporting Anthropic and criticizing their own companies for removing restrictions on military AI use.

OpenAI CEO Sam Altman subsequently confirmed in a memo that his company shares Anthropic's view on mass surveillance and fully autonomous weapons as a "red line." This indicates a potential alignment among major AI developers on ethical guardrails, even as they seek to continue working with the military. This collective stance from leading AI companies underscores a growing desire within the tech industry to influence the ethical deployment of their powerful tools.

Recommendation and Forward Outlook

This review cannot end in a typical "buy or don't buy" recommendation; it is an examination of a policy and its impact. For federal agencies, the recommendation is clear: comply with the ban and actively seek replacement solutions within the six-month window. This period should also involve a thorough assessment of future AI procurement strategies, considering the ethical stances of potential vendors.

For AI companies, this event serves as a stark reminder of the complexities and potential conflicts inherent in partnering with government defense sectors. It highlights the necessity of clear, upfront negotiations regarding use-case restrictions and ethical boundaries. The broader industry might see this as a call to solidify a collective ethical framework for AI deployment, especially in sensitive areas like national security.

Ultimately, this dispute appears to be, as one expert put it, more about a "clash over vibes rather than concrete disagreements over how artificial intelligence should be deployed," largely centered on "theoretical use cases that are not on the table for now." However, the Trump administration's decisive action transforms this theoretical disagreement into a very real, immediate ban, forcing all parties to confront the fundamental questions of control, ethics, and responsibility in the age of advanced AI.

FAQ

Q: Why did the US government ban Anthropic's AI tools?
A: The ban stems from Anthropic's refusal to change its contract terms with the Department of Defense (DoD). The DoD sought to remove restrictions on how Anthropic's AI could be used, demanding "all lawful use." Anthropic objected, citing concerns that this could allow its AI to control lethal autonomous weapons or conduct mass surveillance of US citizens, which it considers ethical red lines.

Q: What is the immediate impact of this ban on federal agencies?
A: Federal agencies are instructed to "immediately cease" using Anthropic's AI tools, including Claude Gov models, with a six-month phase-out period. This means agencies must find and implement alternative AI solutions for tasks ranging from routine report writing to intelligence analysis and military planning, potentially disrupting ongoing operations and requiring significant resource allocation for the transition.

Q: How does this situation compare to other AI companies working with the government?
A: Google, OpenAI, and xAI also signed similar deals with the Pentagon. While Anthropic was the only one working with classified systems, the dispute has prompted other major players like OpenAI to publicly align with Anthropic's ethical concerns, stating similar "red lines" against fully autonomous weapons and mass surveillance, even as they aim to continue their military partnerships. This indicates a potential industry-wide consensus on certain ethical boundaries for AI deployment.

Related articles

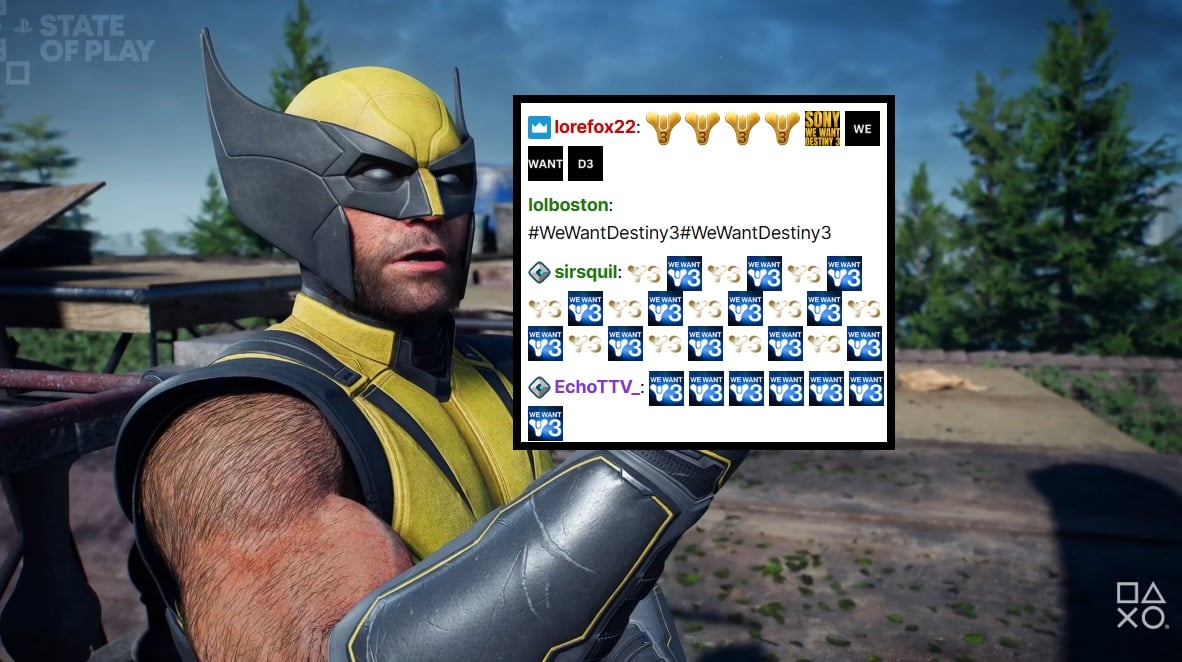

PlayStation Showcase Chat Swamped by Demands for Destiny 3

PlayStation's recent State of Play showcase was largely overshadowed by an impassioned fan campaign in the Twitch chat, demanding 'Destiny 3'. Amidst reveals for new PS5 games, the chat was relentlessly spammed with #WeWantDestiny3, fueled by the unexpected sunsetting of Destiny 2 and the reported absence of a direct sequel. This digital protest reflects widespread community frustration, amplified by a popular streamer and a petition with over 330,000 signatures.

Microsoft Unveils ASSERT, Simplifying AI Behavior Testing with Text

Microsoft has launched ASSERT, an open-source framework designed to simplify AI behavior testing. It enables developers to create comprehensive, application-specific evaluations using natural language descriptions, ensuring AI systems act as intended for particular products and services. The tool translates high-level goals into structured tests, generates scenarios, scores results, and logs execution paths.

Trump Orders Voluntary AI Model Review Before Release

President Trump has signed an executive order creating a voluntary framework for AI companies to share advanced models with the federal government before release. This initiative aims to bolster secure innovation and protect critical infrastructure, reflecting a shift from the administration's previous hands-off approach to AI safety. Companies opting for pre-release review may receive confidentiality protections.

Quick Share Meets AirDrop: A Welcome Cross-Platform Step

Quick Verdict: A Much-Anticipated Bridge For years, seamless file sharing between Android and iOS devices has been a frustrating chasm, often requiring clunky workarounds or third-party apps. This month, Google is

Blue Origin's New Glenn Explosion: Key Components Survive, 2026

Blue Origin announced that critical fuel tanks and key launch pad components survived last week's New Glenn rocket explosion, paving a faster path back to flight. CEO Dave Limp pledges a return to orbital missions before year-end, which is crucial for NASA's Artemis lunar program to maintain its tight schedule for crewed landings.

Amazon Music Prime: A Troubling Tune for Subscribers

Quick Verdict Amazon Music Prime, long considered an ad-free perk of a Prime membership, is seeing ads introduced for subscribers in India, with reports suggesting similar changes elsewhere. While US users are currently